Beyond the wrapper: GenAI development services

Anyone can connect to a model API. Very few teams engineer a secure, governed generative AI system that survives production. SumatoSoft designs and builds generative AI solutions inside your secure infrastructure. You gain:

- Predictable operational cost modeling

- Seamless integration into your existing systems

- Clear ROI projection before full rollout

Why 80% of generative AI prototypes never reach production

Generative AI demos create excitement. Production environments expose operational reality.

Across industries, companies launch promising generative AI pilots, then watch them stall once real users, real data, and formal governance enter the picture. Here is where projects break.

The hallucination trap

In early demos, responses from genAI models look impressive. In production, they must be defensible.

When AI generates incorrect financial figures, misinterprets regulatory clauses, or fabricates technical details, consequences escalate quickly:

- Legal intervenes.

- Compliance blocks rollout.

- Business stakeholders lose trust.

- Executive sponsors withdraw funding.

- Confidence collapses.

Our approach: We engineer systems that operate within defined accuracy boundaries and measurable validation controls.

The security exposure problem

Prototypes often rely on public interfaces and loosely governed access. Once real teams begin using the system, sensitive information flows through it:

- Customer data.

- Financial records.

- Source code.

- Regulatory documentation.

Security reviews intensify. Risk committees intervene. Deployment pauses. The initiative stalls under scrutiny.

Our fix:

We deploy generative AI inside secure, isolated cloud environments with strict access controls and private endpoints. Your data remains inside your architecture. Your intellectual property remains protected.

The token burn crisis

A pilot used by five people can appear financially harmless. Scaling to hundreds of users turns cost into a board-level concern. Uncontrolled API usage leads to:

- Unpredictable monthly cloud bills.

- Budget overruns.

- Finance department intervention.

- Expansion freezes.

AI becomes categorized as too expensive to scale.

Our fix:

We model token usage and operational costs before production begins, optimize architecture for efficiency, and select the appropriate model for each use case so AI operates within defined financial boundaries.

Prototype success is not production readiness

A successful demo creates momentum. Production introduces:

- Security audits.

- Compliance reviews.

- Infrastructure load.

- Executive oversight.

Without governance and structured engineering, projects slow down, budgets freeze, and internal support weakens.

Our fix:

We design for production from day one by embedding governance, cost control, and measurable reliability into the architecture before scaling begins.

SumatoSoft GenAI ecosystem

As a professional gen AI development company, we design, engineer, secure, and scale GenAI systems. Every solution is production-ready, governance-controlled, and economically modeled before deployment.

RAG systems

It’s about secure chatting with your proprietary data. We build secure generative AI systems that enable teams to query internal knowledge instantly across contracts, policies, technical documentation, regulatory files, and databases.

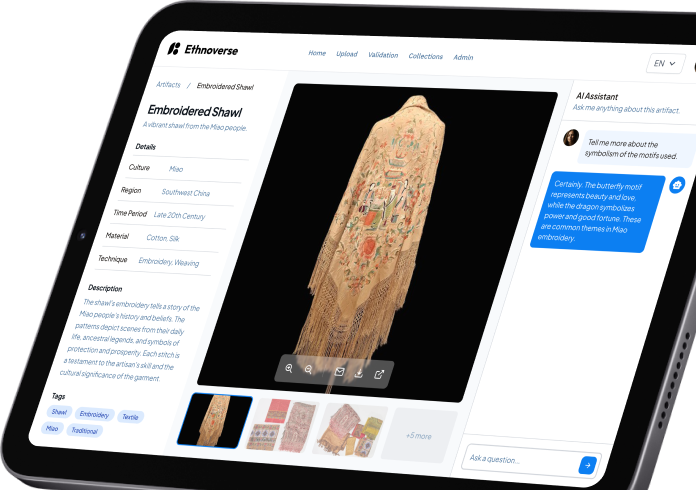

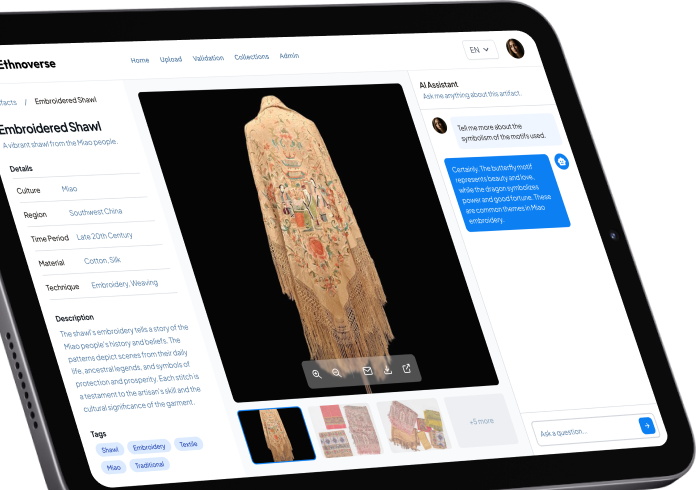

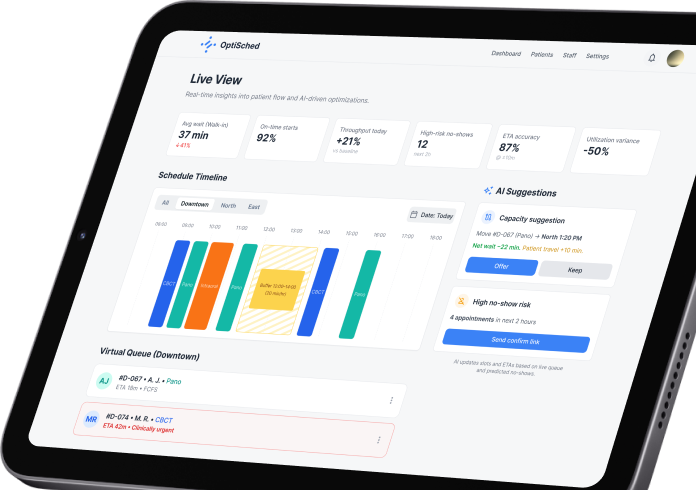

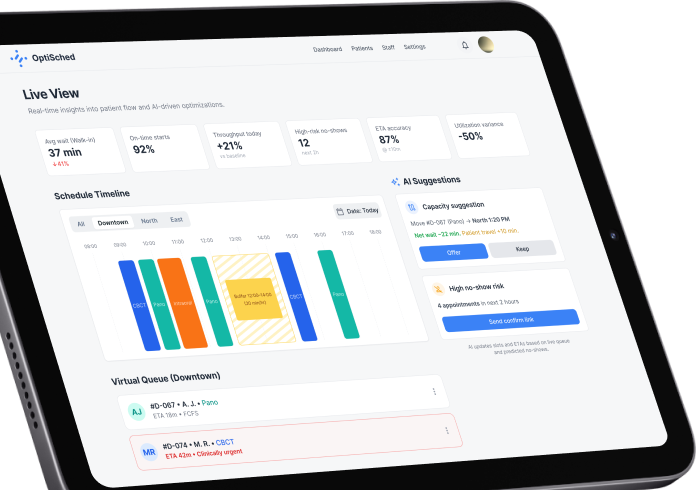

Custom copilots and AI assistants

We design AI copilots tailored to your workflows – no generic chatbots. These assistants operate inside your secure environment and connect directly to your internal tools.

Agentic workflows and autonomous systems

Move beyond text generation into operational automation. Generative AI delivers measurable value when it can take action.

LLM fine-tuning and private model customization

Your own specialized AI model. Fully controlled. When your use case requires domain precision, we customize models specifically for your industry. Your intellectual property remains fully isolated.

RAG systems

It’s about secure chatting with your proprietary data. We build secure generative AI systems that enable teams to query internal knowledge instantly across contracts, policies, technical documentation, regulatory files, and databases.

Custom copilots and AI assistants

We design AI copilots tailored to your workflows – no generic chatbots. These assistants operate inside your secure environment and connect directly to your internal tools.

Agentic workflows and autonomous systems

Move beyond text generation into operational automation. Generative AI delivers measurable value when it can take action.

LLM fine-tuning and private model customization

Your own specialized AI model. Fully controlled. When your use case requires domain precision, we customize models specifically for your industry. Your intellectual property remains fully isolated.

ROI & TCO modeling

Generative AI systems introduce new operational costs: tokens used to generate responses. When usage grows, those token costs grow with it, so we start managing these costs from the start. We calculate expected token usage before full-scale development begins.

What we calculate

Before deployment and system expansion, we estimate:

- Monthly token consumption based on expected user activity.

- Infrastructure required to support that load.

- Cost impact if usage grows.

- Total operating expense over 12–36 months.

You see the projected cost numbers before the first invoice arrives from the working system in production.

How we approach ROI

We start with the business case:

- Which workflow is being improved.

- How much time is saved.

- How often the task occurs.

- What that time costs your organization.

Then we compare the current operating cost and the projected AI operating cost. The objective is measurable economic improvement, so you know what to expect.

What you get

As a result, you will have the following staff at your table:

- Estimated monthly AI operating cost.

- Scaling forecast under growth scenarios.

- Breakeven projection.

- Clear total cost of ownership outlook.

These artifacts serve one goal: to allow you to make an informed investment decision, plan budgets confidently, and scale generative AI without financial surprises.

Book your free Gen AI discovery call

Discuss your business challenge with our Gen AI experts.

Start small: the 4-6 week pilot & prove program

To control the risk of AI initiatives with open-ended budgets and undefined expectations, we offer our 4-6 week program. Our pilot & prove program is a fixed-scope, controlled entry point designed to validate feasibility, economics, and security before full-scale deployment. It consists of 2 phases.

Before building anything, we evaluate whether your data, infrastructure, and governance model can support a production-grade GenAI system.

We assess:

- Data availability and structure.

- Security and compliance constraints.

- Integration feasibility.

- Infrastructure readiness.

- Token cost exposure.

At the end of this phase, you receive :

- A clear feasibility report.

- Risk and compliance overview.

- Architecture direction.

- Initial ROI logic.

If the projected ROI is insufficient or security constraints make the initiative non-viable, we do not move forward with development.

Once the first phase is complete and the ROI is acceptable, we move to the development phase. We design and deploy a controlled GenAI prototype inside your secure environment. The pilot includes:

- Secure architecture setup.

- RAG or copilot implementation.

- Deterministic grounding configuration.

- Token consumption modeling.

- Evaluation and red-team testing.

This is a measurable, production-aligned system.

At the end of the pilot, you receive a fully functional GenAI capability and a clear go/no-go decision framework for moving into full production.

How we engineer for zero data leakage

Generative AI should strengthen your infrastructure – not weaken it. We never route sensitive company data through consumer-grade interfaces or uncontrolled public endpoints. Every GenAI system we build is deployed inside secure, governance-controlled environments designed for compliance, isolation, and auditability.

Private, controlled deployment

We deploy models through enterprise APIs such as Azure OpenAI and AWS Bedrock, or host fine-tuned open-source models like Llama 3 or Mistral inside your private cloud or on-premise infrastructure.

- Your data never becomes training material for public models.

- Your intellectual property remains fully isolated.

Secure data indexing and retrieval

When building RAG systems, we never send raw company documents to external services. Your PDFs, databases, and internal knowledge bases are:

- Indexed locally.

- Vectorized inside your private infrastructure.

- Stored in enterprise-grade vector databases.

- Protected by strict role-based access controls (RBAC).

If a user does not have access to a document, the AI does not access it.

VPC isolation and network security

Your GenAI system operates as a mission-critical business application with defined security boundaries and infrastructure controls. Every production deployment is isolated within your virtual private cloud (VPC). We implement:

- Network-level isolation.

- Encrypted data at rest and in transit.

- API gateway control layers.

- Strict identity and access management.

Compliance-ready by design

We build systems your compliance team can confidently approve. For regulated industries such as finance, healthcare, and energy, we design architectures aligned with:

- SOC 2 requirements.

- HIPAA constraints.

- GDPR principles.

- Internal audit controls.

Our recent AI cases

GenAI engineered for your industry’s reality

Generative AI creates measurable value when it understands operational constraints, regulatory pressure, and data architecture specific to your industry. We build industry-calibrated GenAI systems that integrate directly into real workflows.

Fintech and insurance

In financial services, decisions move at the speed of regulation. Underwriters, compliance officers, and risk teams operate under constant pressure – navigating policy documents, regulatory updates, and fragmented internal data. Generative AI delivers value here when it understands both quantitative models and regulatory mandates.

Healthcare

Healthcare teams manage extensive documentation. Clinicians and administrators handle discharge notes, compliance forms, and internal protocols while patient care requires speed and precision. AI systems in this environment must improve efficiency while maintaining strict privacy protection at all times.

Logistics and supply chain

When shipments stall, revenue slows. Supply chain leaders work in environments where delays cascade, data exists in silos, and decisions must be made within minutes rather than waiting for periodic reports.

Energy and utilities

In energy and utilities, downtime represents operational risk. Engineers rely on decades of maintenance logs, compliance documentation, and technical manuals to diagnose incidents quickly and prevent escalation.

Life sciences

Research advances rapidly. Documentation progresses at a different pace. Life sciences teams navigate complex trial data, regulatory frameworks, and dense scientific literature where timely insight influences product timelines.

AdTech and media

Marketing teams generate vast amounts of campaign metrics, audience data, and performance dashboards. Insights must be extracted in time to guide the next strategic move.

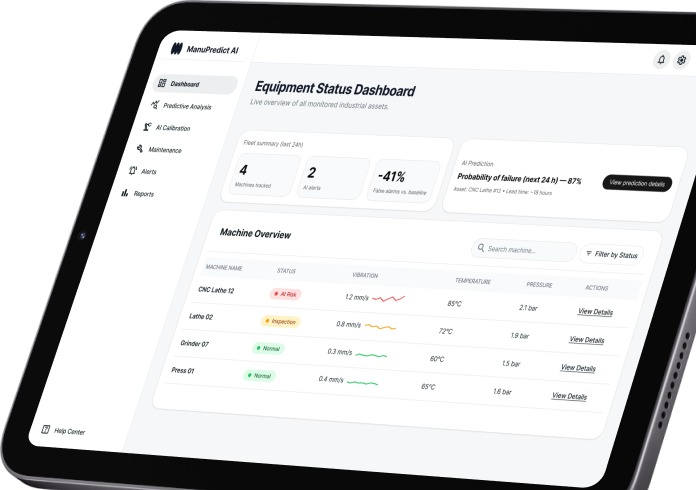

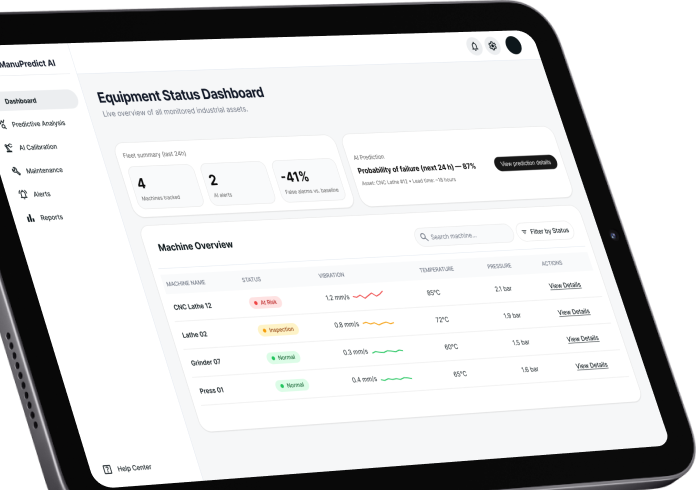

IoT and industrial systems

Factories and industrial sites produce continuous streams of telemetry. Machine logs, sensor data, and maintenance records accumulate faster than teams can review them. Operational decisions depend on accurate and timely interpretation of that data.

Fintech and insurance

In financial services, decisions move at the speed of regulation. Underwriters, compliance officers, and risk teams operate under constant pressure – navigating policy documents, regulatory updates, and fragmented internal data. Generative AI delivers value here when it understands both quantitative models and regulatory mandates.

Healthcare

Healthcare teams manage extensive documentation. Clinicians and administrators handle discharge notes, compliance forms, and internal protocols while patient care requires speed and precision. AI systems in this environment must improve efficiency while maintaining strict privacy protection at all times.

Logistics and supply chain

When shipments stall, revenue slows. Supply chain leaders work in environments where delays cascade, data exists in silos, and decisions must be made within minutes rather than waiting for periodic reports.

Energy and utilities

In energy and utilities, downtime represents operational risk. Engineers rely on decades of maintenance logs, compliance documentation, and technical manuals to diagnose incidents quickly and prevent escalation.

Life sciences

Research advances rapidly. Documentation progresses at a different pace. Life sciences teams navigate complex trial data, regulatory frameworks, and dense scientific literature where timely insight influences product timelines.

AdTech and media

Marketing teams generate vast amounts of campaign metrics, audience data, and performance dashboards. Insights must be extracted in time to guide the next strategic move.

IoT and industrial systems

Factories and industrial sites produce continuous streams of telemetry. Machine logs, sensor data, and maintenance records accumulate faster than teams can review them. Operational decisions depend on accurate and timely interpretation of that data.

GenAI stack we command

Many agencies call an API and label it “GenAI development.” We engineer full-stack, production-grade generative AI systems.

Buyers evaluate AI vendors based on architectural maturity. The tools below reflect the difference between experimental integrations and governed production systems.

How we engineer: our ADLC

Generative AI behaves differently from deterministic software. It interprets, predicts, and generates outputs.

The agentic development lifecycle (ADLC) is our engineering framework for turning probabilistic models into governed systems. Each phase addresses a specific failure point that causes most GenAI initiatives to stall.er

Before a single token is consumed, we define the economic logic.

We start with the business case.

What decision is being accelerated?

What manual workflow is being replaced?

What financial boundary makes this initiative viable?

At this stage we lock in:

- ROI expectations.

- Acceptable error thresholds.

- Data sensitivity classifications.

- Maximum token exposure.

If the economics do not work on paper, the initiative does not proceed.

Security is engineered first and embedded into the foundation.

We design the system as if it were handling regulated financial data. It includes multiple measures; here are some of them:

- Model endpoints are deployed inside your cloud perimeter.

- Vector databases are isolated.

- Access is controlled at the retrieval layer.

- Every interaction is logged and auditable.

- Consumer-grade interfaces are excluded.

- API calls are controlled and monitored.

- Data ownership is clearly defined.

This phase reduces hallucination risk.

Large language models predict plausible answers. Operational systems require verifiable answers. We enforce grounding through retrieval-augmented generation. The model is restricted to approved internal sources. If the answer does not exist in your indexed data, the system responds accordingly.

The objective of this phase is to bring traceability and verifiability to the system.

This phase is about building automation with structured control.

When the solution requires more than question-answer interactions, we design structured agent workflows. Instead of a single model generating free-form outputs, we create bounded execution chains:

- One agent retrieves.

- One agent reasons.

- One agent validates.

- One agent executes actions in external systems.

Every step operates within defined constraints. Autonomy is deliberate and governed.

The system must pass quantitative evaluation and adversarial testing before it is granted operational authority. Before deployment, the system is stress-tested. We measure:

- Context precision.

- Faithfulness to source material.

- Consistency under varied prompts.

Evaluation frameworks such as RAGAS are used to score outputs quantitatively. We then conduct adversarial testing:

- Prompt injection attempts.

- Data extraction simulations.

- Guardrail bypass scenarios.

Systems that fail validation are refined before release.

Performance must align with cost control, or the system becomes too expensive to maintain. Generative AI introduces token consumption as an operational variable that must be managed. We have established a solid approach for that:

- We simulate real-world usage volumes.

- We project monthly inference costs.

- We optimize prompt structure and retrieval size.

When appropriate, workloads are shifted to smaller fine-tuned models to reduce ongoing expense. So, financial forecasting becomes built into the architecture.

Production systems require ongoing control mechanisms. Once deployed, the system is treated as operational infrastructure.

We implement:

- Real-time usage monitoring.

- Token consumption dashboards.

- Automated re-evaluation pipelines.

- Security log auditing.

- Access control reviews.

Model behavior is re-scored periodically to detect drift. Cost thresholds are monitored against projected budgets.

Guardrails are re-tested after architecture changes. The system remains under structured supervision and never runs unattended.

How we engineer zero-hallucination systems

Legal teams block GenAI initiatives for one reason: uncontrolled outputs. We engineer systems that operate inside measurable, enforceable accuracy boundaries. Generative models are probabilistic by nature. Enterprise systems operate within defined, verifiable constraints. So we make a hallucination control a part of software architecture.

Deterministic grounding – RAG architecture

We restrict the model to retrieved, verified data only. Your documents, databases, intranet knowledge, policies, contracts, and technical manuals are securely indexed inside your private infrastructure. If the answer does not exist in approved data sources, the system is programmed to respond: “Insufficient data available.”

- No guessing.

- No fabrication.

- No invented citations.

- Every response can be source-linked and auditable.

Algorithmic evaluation before human review

We replace subjective validation with quantifiable accuracy thresholds before production approval. Before business users interact with the system, we measure it mathematically. Using structured evaluation frameworks such as RAGAS and custom scoring pipelines, we assess:

- Context precision.

- Faithfulness to source documents.

- Retrieval accuracy.

- Response consistency.

Adversarial red-teaming and prompt injection testing

Enterprise AI must withstand hostile inputs besides normal expected usage. With our approach, if the system can be manipulated into unsafe behavior, it does not pass deployment review. Before deployment, our engineers simulate:

- Prompt injection attacks.

- Data exfiltration attempts.

- Context override exploits.

- Policy bypass scenarios.

We attempt to break the system before users interact with it, ensuring it can withstand attacks.

Controlled AI

Many vendors deploy a working prototype and move directly to production, assuming issues will surface and be corrected later. In enterprise environments, that approach creates legal, compliance, and financial exposure.

We deploy governed systems with:

- Retrieval-restricted reasoning.

- Enforced response policies.

- Quantitative evaluation thresholds

- Red-team validated security controls.

- Pre-modeled token consumption limits.

The GenAI software we develop is auditable, measurable, and economically predictable.

Frequently asked questions

Will you use ChatGPT for this?

We use enterprise-grade model endpoints such as Azure OpenAI, AWS Bedrock, or privately hosted open-source models. We do not build production systems on consumer-grade interfaces. Your data is processed inside secure, controlled environments and is never used to train public models.

Can we run these models entirely on-premise?

Yes.

For organizations with strict regulatory or internal security requirements, we deploy fine-tuned open-source models such as Llama or Mistral directly within your private cloud or on-premise infrastructure.

Who owns the AI we build?

You retain full ownership of the architecture, source code, integrations, prompt frameworks, vector databases, and fine-tuned models. There is no proprietary lock-in.

How does SumatoSoft approach generative AI development?

We follow a structured engineering framework called the agentic development lifecycle (ADLC). It governs each stage of development – from business hypothesis validation and secure architecture design to deterministic grounding, evaluation, cost modeling, and production governance. Every system is engineered for security, measurable accuracy, and financial predictability.

What makes SumatoSoft the right choice for generative AI development projects?

As a professional gen AI development company, we combine advanced software engineering with governed GenAI architecture. Our systems are built for production from day one, with embedded cost controls, security isolation, deterministic grounding, and structured evaluation. We design for compliance, scalability, and measurable ROI.

Let’s start

If you have any questions, email us info@sumatosoft.com