Enterprise software development

SumatoSoft develops and updates enterprise systems for companies that value stable operation, deep integration, and the ability to extend their systems with AI models. We work with key platforms, existing solutions, and internal tools. We integrate AI models only when they fit into existing processes and comply with security requirements.

Comprehensive enterprise software services

We provide enterprise software services from consulting and modernization to the development of complex systems. Where needed, we also prepare architecture, data, integration layers, and access models for AI use within existing business operations.

IT consulting

We help enterprises align technology decisions with business goals and operating constraints over the long term. Our consulting covers architecture, technology selection, integration planning, modernization priorities, and the review of AI use cases against security requirements, cost, system impact, and support burden.

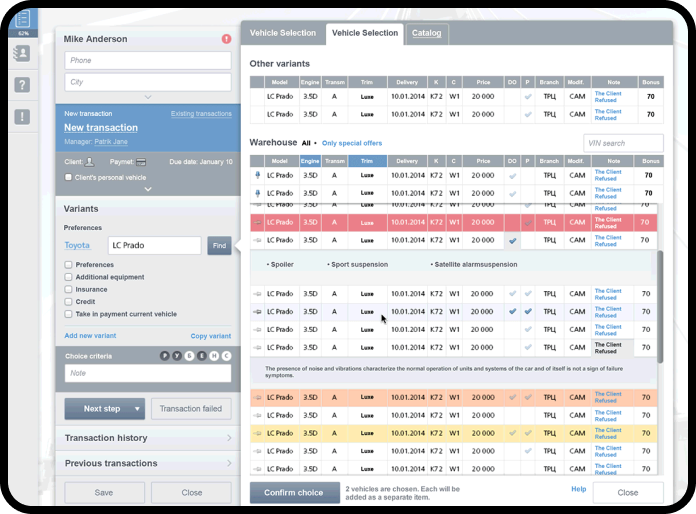

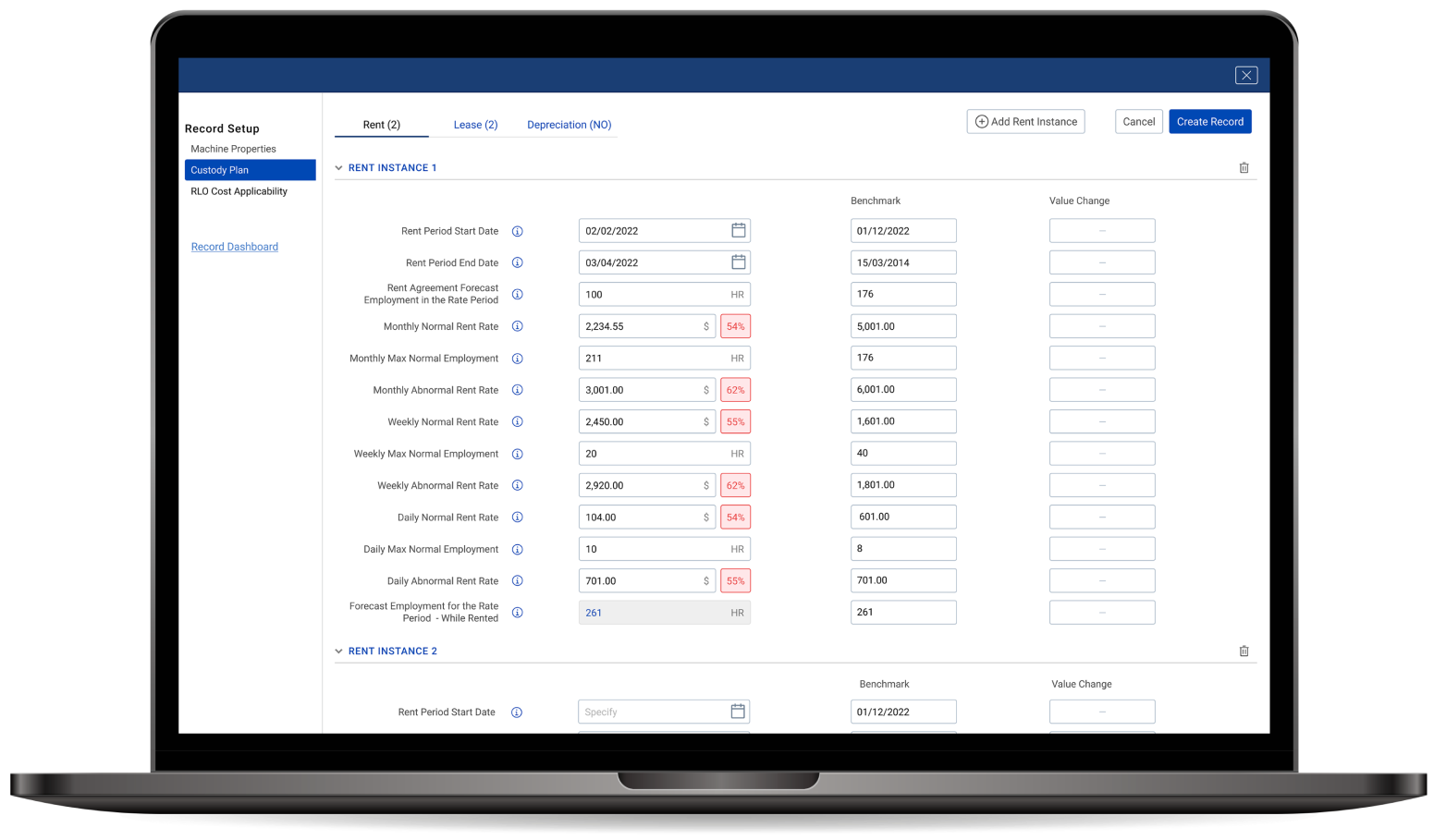

Custom enterprise software development

We design and build enterprise software for core business processes, internal operations, complex workflows, and cross-system coordination. Our systems integrate with existing environments and support long-term maintenance. When AI is in scope, we implement it within the same architecture and under the same security, permissions, auditability, and change-control rules.

Enterprise knowledge graph

We connect enterprise platforms, applications, and data sources into a shared information layer, including the pipelines, data preparation, and semantic indexing needed for enterprise search, knowledge retrieval, and AI systems that work across ERP, CRM, document storage, and other internal tools.

Legacy system modernization

We modernize legacy software by updating the architecture, reducing dependencies on outdated components, improving maintainability, and removing brittle integrations, including refactoring monoliths, defining service boundaries, exposing stable APIs, and rebuilding data flows to support new digital functions and AI use cases.

Cloud solutions

We design, migrate, and optimize enterprise cloud environments across private, public, and hybrid infrastructure. Our work covers hosting strategy, system performance, cost control, resilience, and the deployment of data-intensive or model-based services when required by the architecture.

Data management and BI

We help enterprises govern data and use it more effectively through data management and BI solutions. This includes reporting, analytics, data quality work, and the preparation of data foundations for search, recommendation, forecasting, and decision support systems.

AI starts with data readiness

You cannot point an LLM at scattered SQL tables, shared drives, scanned PDFs, and inconsistent records and expect dependable output. Before we add copilots or agents, we run a data readiness audit. We inventory the sources, remove duplicates, define metadata, map permissions, extract content from documents, and prepare the retrieval layer so the AI has a controlled foundation to work from.

- Data source inventory across systems, folders, databases, and documents

- Permission mapping so retrieval respects the same access model as your staff

- Deduplication, normalization, chunking, and metadata design

- Pilot dataset preparation with baseline retrieval and answer evaluation
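As an illustration of the deduplication, normalization, chunking, and metadata steps listed above, here is a minimal Python sketch. It is not a production pipeline: the document fields (`id`, `text`, `allowed_groups`), the `Chunk` type, and the fixed word-window chunking are assumptions made for the example, and real audits usually need near-duplicate detection and format-aware chunking.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    source_id: str             # hypothetical identifier of the source document
    position: int              # order of the chunk within its document
    text: str
    allowed_groups: frozenset  # groups permitted to retrieve this chunk


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivially different copies match."""
    return re.sub(r"\s+", " ", text).strip().lower()


def deduplicate(docs: list[dict]) -> list[dict]:
    """Keep the first document seen for each normalized-content hash."""
    seen: set[str] = set()
    unique = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc["text"]).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


def chunk(doc: dict, max_words: int = 50) -> list[Chunk]:
    """Split a document into fixed-size word windows that carry its metadata."""
    words = doc["text"].split()
    return [
        Chunk(
            source_id=doc["id"],
            position=i // max_words,
            text=" ".join(words[i:i + max_words]),
            allowed_groups=frozenset(doc["allowed_groups"]),
        )
        for i in range(0, len(words), max_words)
    ]


# Example input: the first two records differ only in case and spacing.
docs = [
    {"id": "hr-001", "text": "Leave policy:  20 days per year.", "allowed_groups": ["hr"]},
    {"id": "hr-002", "text": "leave policy: 20 days per year.", "allowed_groups": ["hr"]},
    {"id": "fin-001", "text": "Invoices are approved by finance.", "allowed_groups": ["finance"]},
]
chunks = [c for d in deduplicate(docs) for c in chunk(d)]
```

Each chunk keeps the access metadata of its source document, which is what lets a retrieval layer enforce permissions later.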

Your next competitive advantage starts now

Start your custom AI enterprise software journey.

Autonomous enterprise

Companies need systems that eliminate manual work in lengthy processes and provide access to up-to-date data at the point of decision-making. We design such systems through process automation, integration, and the use of AI models, delivering measurable gains in speed, consistency of results, and response quality.

Workflow automation and orchestration

We build systems that route tasks between departments, trigger actions across connected platforms, and handle deviations in accordance with predefined rules. Processes remain manageable without constant manual intervention, and approvals are not interrupted by task transfers between teams.

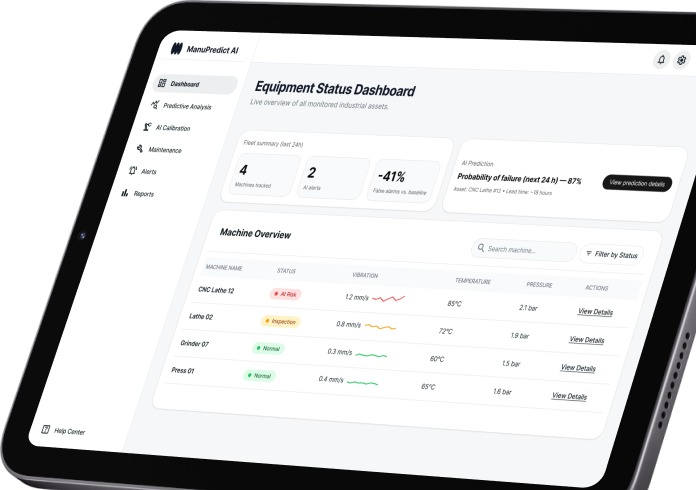

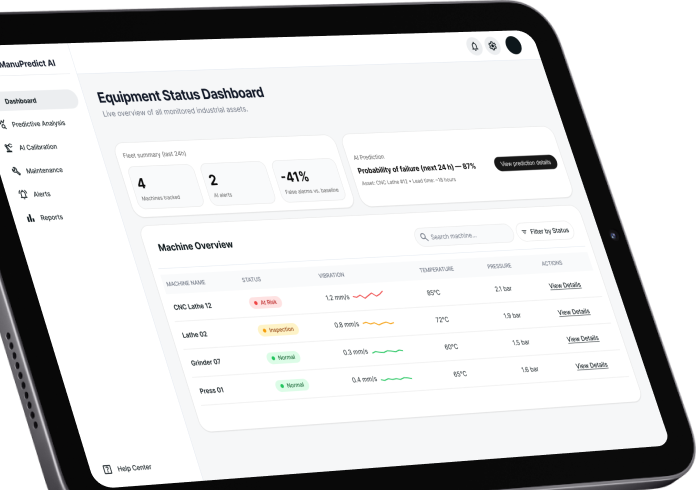

Predictive operations

We apply machine learning to forecast demand, detect failure risk, surface anomalies, and help teams intervene earlier in operational workflows.

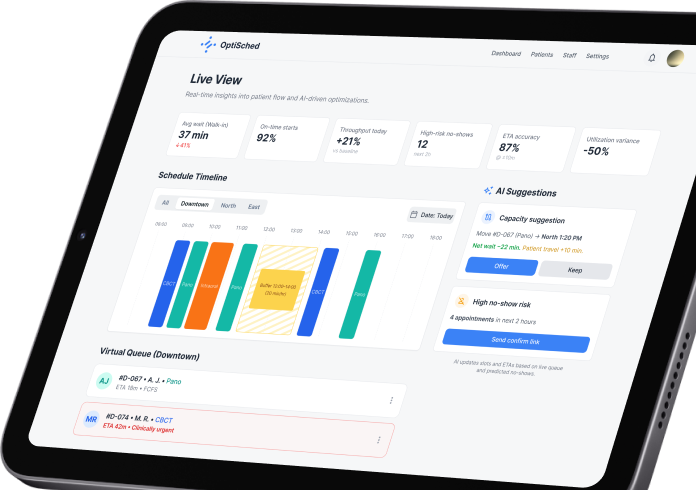

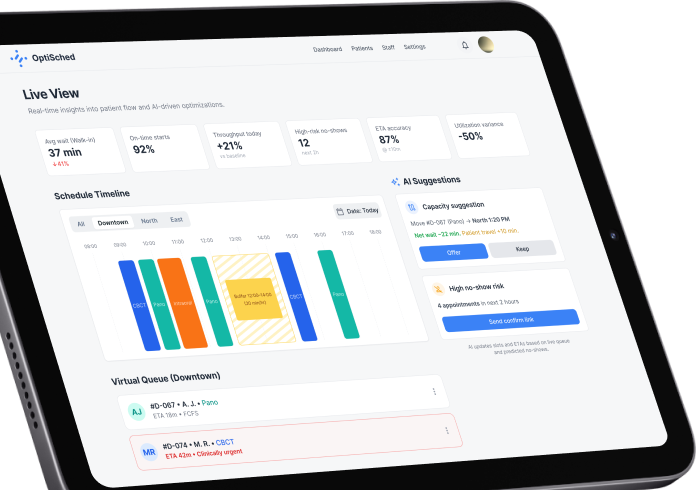

Enterprise copilots and decision support

We create internal AI tools that retrieve approved information, summarize case context, recommend next steps, and assist employees inside existing systems.

Connected operations and IoT

For businesses with equipment, devices, or field assets, we connect operational data to monitoring, alerts, maintenance logic, and service workflows.

Digital twins and simulation

Where the use case requires it, we model assets or operating environments in software to test changes, compare scenarios, and support planning without disrupting live operations.

Data platforms and operational analytics

We structure data pipelines and analytics layers that support reporting, forecasting, search, and model-backed automation across the enterprise.

Cyber-physical systems

We integrate computational algorithms with physical components, creating intelligent systems where machines and humans collaborate seamlessly for enhanced production and service delivery.

Enterprise solutions built for your industry

Healthcare

We develop enterprise systems for telemedicine, patient management, remote monitoring, and clinical data exchange. These solutions connect care processes, meet data security requirements, and provide teams with consistent information.

FinTech

We create financial systems for payments, digital wallets, trading operations, and risk management. These systems include secure transaction frameworks and internal tools. We also add anomaly detection and monitoring models when required.

Logistics and transportation

We develop systems for fleet management, route planning, supply chain monitoring, and warehouse coordination. These systems help reduce delays, balance workloads, and synchronize work between related services.

Manufacturing

We create solutions for production management, equipment monitoring, maintenance planning, and performance analysis. We integrate IoT and predictive models to monitor downtime, line load, and asset health.

Travel and hospitality

We design systems for reservations, facility management, and guest services. They support high-volume operations, connect customer data, and streamline processes across multiple locations.

Telecommunications

We create solutions for customer self-service, billing, service management, and network operations. We use AI models for request routing, incident handling, and recommendations, which reduces the workload on teams.

Enterprise software built on standards

We build enterprise software around security, compliance, accessibility, and audit requirements from the start. Where AI is part of the scope, we apply the same discipline to model access, retrieval, logging, and human review. Our delivery processes are backed by ISO 9001:2015 and ISO/IEC 27001:2022-certified operations, and we support projects aligned with the standards and frameworks listed below.

- GDPR

- ISO 9001:2015

- ISO/IEC 27001:2022

- HIPAA

- PCI DSS

- SOC 2

- WCAG

- OWASP

Quick playbook: selecting an enterprise development partner [pdf]

Get a free playbook that will help you find the right enterprise software development partner. No email required.

Enterprise software development approach

At SumatoSoft, we use a proven development process refined through highly complex projects. It helps us manage scope, budget, quality, and risks at every stage. If the system includes AI components, we complement the process with ADLC controls: data access management, model validation, release planning, and post-launch monitoring.

Project definition

We begin by defining the goals, requirements, boundaries, and expected results. We conduct stakeholder interviews and workshops to clarify business objectives and technical constraints. At this stage, we develop success metrics and a roadmap with key milestones.

Team formation

Team composition depends on the architecture, project stage, subject area, and integrations. We select specialists for specific tasks and define areas of responsibility. This reduces communication overhead and helps avoid bottlenecks.

Cost estimation

We base the estimate on the scope of work, dependencies, and deadlines. We break down tasks into the following areas: development, design, testing, and analytics. This approach aligns the budget with the actual scope of work and the agreed-upon outcome.

Risk management

We identify risks in advance and reassess them as the project progresses. We monitor technical, operational, business, and security issues. For AI functions, we additionally consider data quality, access restrictions, model result validation, and failure scenarios.

Documentation and knowledge transfer

We maintain working documentation throughout the project. This is necessary for onboarding, collaboration, and knowledge transfer. We use centralized repositories to ensure all information is accessible to the team. For AI projects, we describe data sources, access rules, validation logic, and system limitations.

Code review

We review code regularly. This helps maintain the system’s readability, stability, and security. We use static analysis and internal development standards, and senior engineers conduct the reviews. For AI components, we check model integration, query processing, data access boundaries, and system behavior during failures.

Reporting

Project progress remains transparent. The manager regularly reports on progress, deviations, and risks. We present work results in a demo at the end of each sprint to obtain feedback and adjust the plan.

Post-launch warranty

After the release, we remain on the project for the warranty period. We fix defects, update security components, and monitor system performance. If necessary, we will transition the project to long-term support.

Our expertise in tools and technologies

At SumatoSoft, we select the tools, technologies, and platforms that best suit each enterprise software project. Our expertise spans programming languages, frameworks, databases, and cloud services, which lets us compare several architecture variants and choose the best one rather than locking ourselves into one tech stack for every business.

Turn your business logic into digital

Talk to our Business Analyst

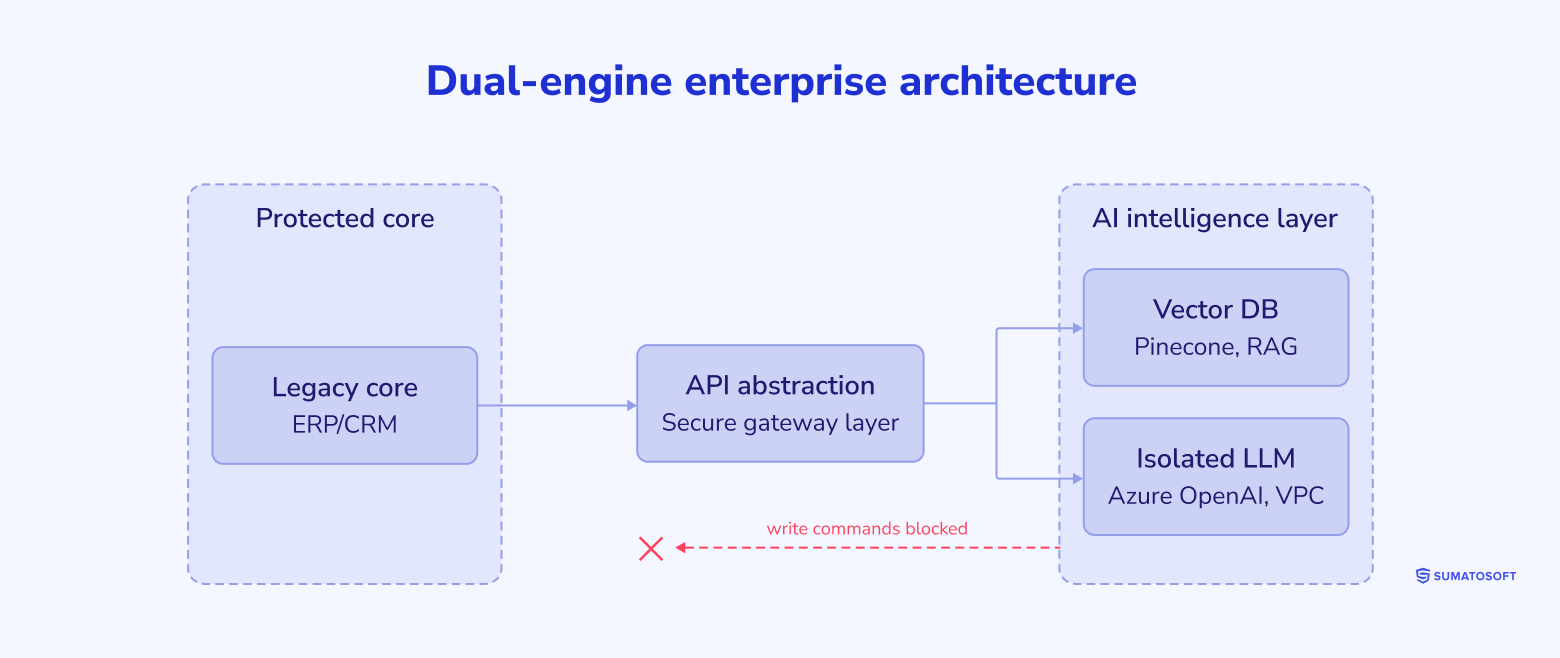

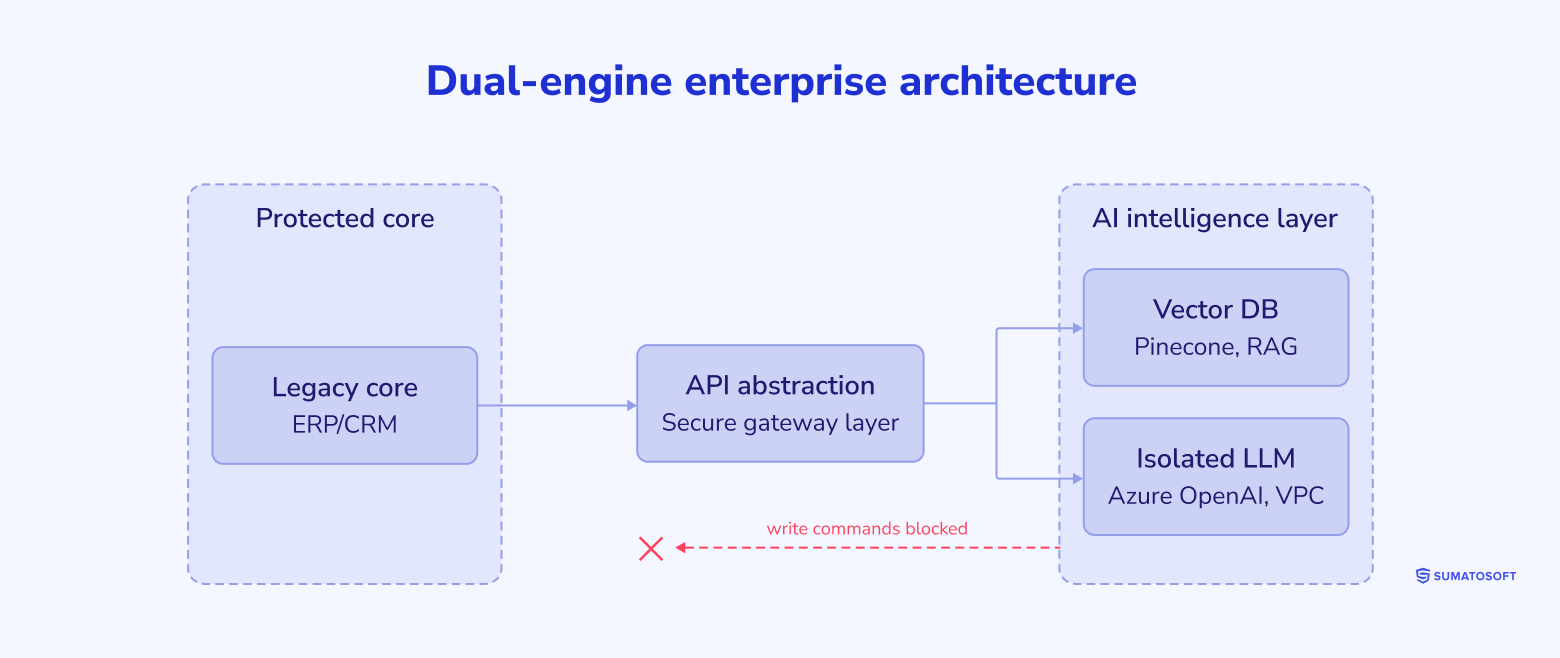

AI-first security posture

Enterprise systems usually already have perimeter security. The harder problem starts when AI touches internal data, retrieval pipelines, tool access, and business actions. We design AI-enabled systems with controls that address prompt injection, data and model poisoning, sensitive information disclosure, excessive agency, and unbounded consumption, alongside the baseline requirements for encryption, access control, logging, and recovery.

Identity and access control

We tie AI access to the same identity and permission model used across the enterprise system. The platform checks user rights before retrieval, limits what the model can access, restricts which tools it can call, and maintains tenant boundaries.
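A minimal Python sketch of checking user rights before retrieval, under stated assumptions: the `User` and `Document` types, the group-based access rule, and the keyword match are hypothetical stand-ins for a real identity provider and search index.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: str
    tenant_id: str
    groups: frozenset


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant_id: str
    allowed_groups: frozenset
    text: str


def visible_documents(user: User, index: list[Document]) -> list[Document]:
    """Return only documents in the user's tenant that share a group with the user."""
    return [
        doc for doc in index
        if doc.tenant_id == user.tenant_id and doc.allowed_groups & user.groups
    ]


def retrieve(user: User, query: str, index: list[Document]) -> list[Document]:
    """Filter by permissions first, then run a naive keyword match."""
    terms = query.lower().split()
    return [
        doc for doc in visible_documents(user, index)
        if any(term in doc.text.lower() for term in terms)
    ]


index = [
    Document("d1", "acme", frozenset({"hr"}), "Salary bands for 2024"),
    Document("d2", "acme", frozenset({"sales"}), "Salary commission plan"),
    Document("d3", "globex", frozenset({"sales"}), "Salary commission plan"),
]
alice = User("alice", "acme", frozenset({"sales"}))
results = retrieve(alice, "salary", index)  # only d2 is both visible and matching
```

Because filtering happens before matching, content the user cannot view never reaches the model's context, regardless of how the query is phrased.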

Prompt and tool security

We place policy enforcement between user input, retrieval, and every downstream action. This layer filters unsafe instructions, blocks prompt injection patterns, constrains tool execution, validates outputs before they reach other systems, and routes higher-risk actions to human review.
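The sketch below shows one way such a policy layer could be shaped in Python. The tool names, the policy tables, and the phrase list are hypothetical, and simple phrase matching is only a placeholder: it catches a few obvious cases and is not a sufficient prompt-injection defense on its own.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # route to a human before executing
    DENY = "deny"


@dataclass(frozen=True)
class ToolCall:
    tool: str
    arguments: dict


# Hypothetical policy tables for the example.
ALLOWED_TOOLS = {"search_orders", "create_refund"}
REVIEW_REQUIRED = {"create_refund"}
SUSPICIOUS_PHRASES = ("ignore previous instructions", "disregard your rules")


def check_input(user_text: str) -> Decision:
    """Reject input containing known unsafe phrases (illustrative only)."""
    lowered = user_text.lower()
    if any(phrase in lowered for phrase in SUSPICIOUS_PHRASES):
        return Decision.DENY
    return Decision.ALLOW


def check_tool_call(call: ToolCall) -> Decision:
    """Allow only listed tools and send higher-risk ones to human review."""
    if call.tool not in ALLOWED_TOOLS:
        return Decision.DENY
    if call.tool in REVIEW_REQUIRED:
        return Decision.REVIEW
    return Decision.ALLOW
```

For example, `check_tool_call(ToolCall("create_refund", {"order_id": "A-1"}))` returns `Decision.REVIEW`, while a call to an unlisted tool is denied outright.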

Data integrity and retrieval security

We protect the data layer that feeds AI features. This includes source validation, document provenance checks, indexing controls, poisoning detection, and isolation between retrieval services and core records to prevent untrusted content from shaping model behavior unchecked.

Model runtime and network boundaries

We keep model services, vector stores, and core systems in controlled network segments with private connectivity where required. Write actions do not pass straight from the model to the database. They move through governed APIs, business rules, approval logic, and audit logs.
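To illustrate the governed write path, here is a small Python sketch in which a model-initiated change has to pass a business rule and an approval check, and every attempt is recorded. `RefundService`, the refund threshold, and the in-memory storage are assumptions for the example, not a description of a specific system.

```python
from dataclasses import dataclass, field
from typing import Optional

MAX_AUTO_REFUND = 100.0  # hypothetical business rule: larger refunds need approval


@dataclass
class RefundService:
    """In-memory stand-in for a governed API in front of the system of record."""
    refunds: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def request_refund(self, order_id: str, amount: float, requested_by: str,
                       approved_by: Optional[str] = None) -> str:
        if amount <= 0:
            outcome = "rejected"
        elif amount > MAX_AUTO_REFUND and approved_by is None:
            outcome = "pending_approval"
        else:
            self.refunds[order_id] = amount  # the only place a write happens
            outcome = "applied"
        # Every attempt is logged, including rejected and pending ones.
        self.audit_log.append({
            "order_id": order_id,
            "amount": amount,
            "requested_by": requested_by,
            "approved_by": approved_by,
            "outcome": outcome,
        })
        return outcome


service = RefundService()
small = service.request_refund("A-1", 40.0, requested_by="ai-agent")
large = service.request_refund("A-2", 500.0, requested_by="ai-agent")
approved = service.request_refund("A-2", 500.0, requested_by="ai-agent",
                                  approved_by="manager-7")
```

The model never touches `refunds` directly; it can only call `request_refund`, so the rule and the log apply to every write.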

Secure delivery and observability

Security controls are built into delivery pipelines and runtime monitoring. We log prompts, retrieved sources, model responses, tool calls, and permission decisions so teams can investigate failures, review system behavior, and support audit requirements.
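A structured audit record for one AI interaction might look like the following Python sketch. The field names and the JSON-lines output are assumptions; a real deployment would also decide which fields to redact or hash before storage.

```python
import json
from datetime import datetime, timezone


def audit_record(user_id: str, prompt: str, source_ids: list, response: str,
                 tool_calls: list, permission_decision: str) -> dict:
    """Bundle everything needed to reconstruct one interaction later."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "prompt": prompt,
        "retrieved_sources": source_ids,
        "response": response,
        "tool_calls": tool_calls,
        "permission_decision": permission_decision,
    }


record = audit_record(
    user_id="alice",
    prompt="Summarize ticket 4812",
    source_ids=["kb-17", "ticket-4812"],
    response="The customer reports a billing mismatch.",
    tool_calls=[{"tool": "search_orders", "decision": "allow"}],
    permission_decision="allow",
)
line = json.dumps(record, sort_keys=True)  # one JSON object per log line
```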

Security baseline

Alongside AI-specific controls, we still apply the standard protections enterprise software requires: encryption in transit and at rest, secret management, secure CI/CD, backup policies, and operational monitoring.

Custom software that scales as you grow

Discuss your requirements with our team

Benefits of custom enterprise software

With custom enterprise application development, we help our clients streamline business processes across manufacturing, procurement, services, sales, finance, and HR by building complex, customizable, scalable, and secure ERP systems.

Forecasting and decision making

We support management with timely data for planning and decision-making. Where relevant, we strengthen these systems with AI and machine learning for forecasting, anomaly detection, and pattern analysis.

Business process automation

We automate business operations, including payment flows, manufacturing processes, and internal workflows. For businesses that use connected devices, we also apply IoT to track events and automate process steps across the operation.

Data centralization & integration

We connect departments, teams, and business systems so data can move more consistently across the organization. This improves coordination, visibility, and process efficiency.

Improved data safety & security

We help protect enterprise data through centralized access control, consistent security policies, and controlled user permissions across the system.

Collaboration management

We build tools that support coordination across teams and business units, including project management systems, video conferencing tools, messaging platforms, and other internal collaboration software.

ERP system optimization

We help clients streamline business processes across manufacturing, procurement, services, sales, finance, and HR by building ERP systems that are customizable, scalable, secure, and aligned with the way the business operates.

What makes SumatoSoft a reliable partner

- We have delivered software in 25+ countries and across multiple business domains.

- We focus on long-term cooperation, with the average client engagement running 3+ years.

- We work transparently and keep delivery visible.

- Standard enterprise software follows established engineering and QA practices. When AI is part of the system, we extend that process with ADLC controls so that architecture, evaluation, cost control, access governance, and production behavior are handled in a structured way.

Let’s start

If you have any questions, email us at info@sumatosoft.com.

Frequently asked questions

How do you integrate Generative AI into an on-premise legacy system without using public cloud APIs?

We can deploy the AI layer within private infrastructure rather than routing requests through public endpoints. In regulated environments, that may mean self-hosted open-source models, isolated networking, private gateways, and enterprise middleware that keeps the data path inside your environment.

How do you protect tenant data when AI features are added to a large enterprise platform?

Access control must be enforced before retrieval occurs. We map the user’s identity and permissions to the retrieval layer so the model can only receive content the user is already allowed to view. In multi-tenant systems, that also means tenant isolation in storage, indexing, and logging.

Our monolith already struggles under load. Will an LLM make it worse?

It will if the AI workload is pushed through the monolith itself. We usually separate the AI-heavy workflow into its own service and let it run asynchronously, so the core application is not forced to carry model latency, retrieval calls, or long-running agent logic.
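The idea of keeping model latency off the core request path can be sketched with a queue and background workers. This Python example runs in a single process with `asyncio` purely for illustration; `call_model` is a stand-in for a real model call, and an actual deployment would more likely use a separate service behind a message broker.

```python
import asyncio


async def call_model(prompt: str) -> str:
    """Stand-in for a slow model call."""
    await asyncio.sleep(0.01)
    return f"summary of: {prompt}"


async def worker(jobs: asyncio.Queue, results: dict) -> None:
    """Pull jobs off the queue so the caller never waits on the model."""
    while True:
        job_id, prompt = await jobs.get()
        try:
            results[job_id] = await call_model(prompt)
        finally:
            jobs.task_done()


async def main() -> dict:
    jobs: asyncio.Queue = asyncio.Queue()
    results: dict = {}
    workers = [asyncio.create_task(worker(jobs, results)) for _ in range(2)]

    # The core application only enqueues work and returns immediately.
    for job_id, prompt in enumerate(["ticket 1", "ticket 2", "ticket 3"]):
        jobs.put_nowait((job_id, prompt))

    await jobs.join()  # in this demo, wait for completion before shutting down
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results


results = asyncio.run(main())
print(results)
```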

How do you test an enterprise system when AI outputs are not identical every time?

We do not rely solely on pass-fail checks. We combine standard QA with retrieval tests, guarded evaluation datasets, and model-specific metrics to track whether the system stays grounded, permission-safe, and useful after every release.
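As a rough illustration of metrics that tolerate non-identical outputs, the Python sketch below scores retrieval recall and permission safety over a small evaluation set. The case format (`expected`, `retrieved`, `allowed`) and the 0.8 recall threshold are assumptions for the example; groundedness of the generated answer would need its own check.

```python
def retrieval_recall(expected_ids: set, retrieved_ids: list) -> float:
    """Share of the expected sources that were actually retrieved."""
    if not expected_ids:
        return 1.0
    return len(expected_ids & set(retrieved_ids)) / len(expected_ids)


def permission_violations(allowed_ids: set, retrieved_ids: list) -> list:
    """Retrieved sources the test user was not allowed to see."""
    return [doc_id for doc_id in retrieved_ids if doc_id not in allowed_ids]


def evaluate(cases: list[dict], min_recall: float = 0.8) -> dict:
    """Aggregate per-case metrics into a release gate."""
    recalls = [retrieval_recall(c["expected"], c["retrieved"]) for c in cases]
    violations = [
        v for c in cases for v in permission_violations(c["allowed"], c["retrieved"])
    ]
    mean_recall = sum(recalls) / len(recalls)
    return {
        "mean_recall": mean_recall,
        "violations": violations,
        "passed": mean_recall >= min_recall and not violations,
    }


cases = [
    {"expected": {"a", "b"}, "retrieved": ["a", "b", "c"], "allowed": {"a", "b", "c"}},
    {"expected": {"d", "e"}, "retrieved": ["d"], "allowed": {"d", "e"}},
]
report = evaluate(cases)
```

Running the same evaluation set after every release turns "the answers look fine" into a tracked number with a threshold.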

Should we fine-tune a model on our enterprise data or use RAG?

In most enterprise cases, we start with RAG. It is easier to update, easier to govern, and better suited to data that changes often. We consider fine-tuning when the task depends on proprietary reasoning patterns, strict output formats, or domain-specific behavior that retrieval alone cannot provide.