Chat with your enterprise data. RAG development.

We build secure, production-grade Retrieval-Augmented Generation systems that let your teams chat with proprietary data without hallucinations or data leakage.

RAG transforms your data into leverage

Your company already has the information it needs. The risk comes from fragmented sources, inconsistent versions, and delayed access to critical knowledge.

- Engineers search across legacy systems.

- Legal teams review entire contracts to locate a single clause.

- Compliance specialists manually verify policies before audits.

- Support escalates tickets because knowledge is scattered.

RAG removes search friction and shortens decision cycles. Instead of digging through files, your teams receive precise, citation-backed answers inside a secure environment.

End-to-end RAG development services

We engineer production-ready RAG systems – securely, predictably, and with measurable ROI.

Discovery & feasibility

We assess your data quality, infrastructure, security posture, and projected usage.

If RAG does not create value, we communicate that upfront.

Outcome: Architecture direction + ROI and token cost forecast.

Architecture & secure design

We design RAG systems with:

- Secure ETL and data ingestion pipelines.

- Private vector databases.

- Hybrid search and re-ranking.

- RBAC at the retrieval level.

- PII masking layers.

- VPC-isolated LLM endpoints.

Your data is vectorized privately and never trains public models.

Outcome: Secure, governed RAG blueprint.

Development & integration

We build the full stack – including retrieval architecture, data pipelines, and orchestration.

Our engineers connect your legacy ERP, CRM, SQL databases, PDFs, and intranets into a clean retrieval architecture.

Multi-modal support handles tables, charts, and scanned documents.

Continuous sync keeps knowledge up to date.

Outcome: Working RAG system integrated with real data.

Evaluation & production deployment

Before launch, we validate mathematically:

- Faithfulness – no invented answers.

- Context precision – correct document retrieval.

- Prompt-injection resilience.

- Token burn projections.

We deploy inside AWS, Azure, or private infrastructure with monitoring and cost controls.

Outcome: Production-ready RAG system with governance built in.

Start your AI journey today

Contact with our experts to discuss how trasform your data to valuable assets.

Challenges limiting RAG effectiveness

Retrieval-augmented generation can transform how organizations access knowledge. Complex environments introduce structural challenges that require deliberate engineering.

Here is what typically limits RAG effectiveness – and how we address it.

Messy, fragmented data environments

Knowledge rarely lives in clean text files. It is scattered across scanned PDFs, complex financial tables, legacy SQL databases, SharePoint folders, ERP exports, slide decks, and archived reports.

When documents are not properly parsed and structured before vectorization, retrieval quality declines and answer reliability suffers.

How we solve it

We treat data readiness as a foundational engineering phase. Before deployment, we assess, structure, and prepare knowledge so the retrieval layer operates on governed, high-quality inputs instead of raw, inconsistent documents.

Retrieval precision determines answer accuracy

Large language models generate responses strictly from retrieved context.

If the wrong paragraph is retrieved or partially relevant content is supplied, the model attempts to complete the answer. Hallucinations emerge in otherwise functioning systems.

How we solve it

We design retrieval as a controlled accuracy system with measurable evaluation criteria. Responses are constrained to verified context, and reliability is validated through structured testing before production rollout.

If the answer does not exist in your documents, the system is programmed to say so rather than generate assumptions.

Data exposure risks

Information has strict access boundaries. Many RAG implementations enforce permissions at the UI level while leaving the retrieval layer unrestricted. This creates internal data exposure risk.

How we solve it

Security enforcement begins at the infrastructure level. Access control, isolation, and governance are embedded into the retrieval architecture so the system only accesses data aligned with authenticated permissions. Proprietary data remains protected within controlled environments.

Knowledge changes daily

Contracts are revised. Policies are updated. CRM records expand. A static vector database becomes outdated quickly, reducing answer reliability over time.

How we solve it

We engineer RAG as a living system. Continuous synchronization mechanisms ensure knowledge remains aligned with the current state of business data. Your RAG system always reflects the latest version of organizational truth.

Token costs scale with usage

RAG systems operate on token-based infrastructure. At a small scale, costs appear negligible. At high usage volumes, uncontrolled retrieval depth and prompt size inflate monthly cloud bills.

How we solve it

Cost governance is incorporated into architecture planning from the outset. Usage forecasting, prompt discipline, and infrastructure optimization are evaluated before scaling decisions are made, ensuring predictable total cost of ownership.

Legacy systems are hard to connect

Knowledge often lives inside 10-15-year-old ERP systems, fragmented databases, and custom web platforms. Many AI vendors build impressive demos and encounter difficulties extracting structured data from legacy environments.

Without integration, RAG becomes a disconnected tool.

How we solve it

We approach RAG as an integration initiative. Data sources are systematically connected to retrieval infrastructure through engineered extraction and normalization workflows that align legacy systems with modern AI environments.

Messy, fragmented data environments

Knowledge rarely lives in clean text files. It is scattered across scanned PDFs, complex financial tables, legacy SQL databases, SharePoint folders, ERP exports, slide decks, and archived reports.

When documents are not properly parsed and structured before vectorization, retrieval quality declines and answer reliability suffers.

How we solve it

We treat data readiness as a foundational engineering phase. Before deployment, we assess, structure, and prepare knowledge so the retrieval layer operates on governed, high-quality inputs instead of raw, inconsistent documents.

Retrieval precision determines answer accuracy

Large language models generate responses strictly from retrieved context.

If the wrong paragraph is retrieved or partially relevant content is supplied, the model attempts to complete the answer. Hallucinations emerge in otherwise functioning systems.

How we solve it

We design retrieval as a controlled accuracy system with measurable evaluation criteria. Responses are constrained to verified context, and reliability is validated through structured testing before production rollout.

If the answer does not exist in your documents, the system is programmed to say so rather than generate assumptions.

Data exposure risks

Information has strict access boundaries. Many RAG implementations enforce permissions at the UI level while leaving the retrieval layer unrestricted. This creates internal data exposure risk.

How we solve it

Security enforcement begins at the infrastructure level. Access control, isolation, and governance are embedded into the retrieval architecture so the system only accesses data aligned with authenticated permissions. Proprietary data remains protected within controlled environments.

Knowledge changes daily

Contracts are revised. Policies are updated. CRM records expand. A static vector database becomes outdated quickly, reducing answer reliability over time.

How we solve it

We engineer RAG as a living system. Continuous synchronization mechanisms ensure knowledge remains aligned with the current state of business data. Your RAG system always reflects the latest version of organizational truth.

Token costs scale with usage

RAG systems operate on token-based infrastructure. At a small scale, costs appear negligible. At high usage volumes, uncontrolled retrieval depth and prompt size inflate monthly cloud bills.

How we solve it

Cost governance is incorporated into architecture planning from the outset. Usage forecasting, prompt discipline, and infrastructure optimization are evaluated before scaling decisions are made, ensuring predictable total cost of ownership.

Legacy systems are hard to connect

Knowledge often lives inside 10-15-year-old ERP systems, fragmented databases, and custom web platforms. Many AI vendors build impressive demos and encounter difficulties extracting structured data from legacy environments.

Without integration, RAG becomes a disconnected tool.

How we solve it

We approach RAG as an integration initiative. Data sources are systematically connected to retrieval infrastructure through engineered extraction and normalization workflows that align legacy systems with modern AI environments.

Accuracy & hallucination control framework

RAG systems fail when the model guesses.

We engineer systems that eliminate guessing. As a part of our agentic development lifecycle (ADLC), we implement measurable, enforceable accuracy controls that transform probabilistic LLMs into governed systems.

Deterministic grounding

Basic RAG retrieves approximate context. Advanced RAG retrieves precise context.

We implement hybrid search architectures combining:

- Semantic search.

- Keyword search.

- Metadata filtering.

- Re-ranking algorithms.

This ensures the model receives the most relevant paragraphs before generating an answer. The LLM operates on complete and properly ranked context.

Hybrid retrieval & re-ranking

Basic RAG retrieves approximate context. Advanced RAG retrieves precise context.

We implement hybrid search architectures combining:

- Semantic search.

- Keyword search.

- Metadata filtering.

- Re-ranking algorithms.

This ensures the model receives the most relevant paragraphs before generating an answer. The LLM operates on complete and properly ranked context.

Algorithmic evaluation – RAGAS & faithfulness scoring

We do not rely on manual spot checks. Before deployment, we use automated evaluation frameworks such as RAGAS to score system performance on measurable metrics:

- Faithfulness – Did the model strictly use the retrieved context?

- Context precision – Did the retrieval layer supply the correct documents?

- Answer relevancy – Does the response resolve the user’s query?

This allows us to mathematically validate accuracy before legal or compliance teams review the system.

Red-teaming & prompt injection defense

Production systems must withstand adversarial behavior. We deliberately stress-test the system to identify weaknesses before deployment. Before rollout, we simulate:

- Prompt injection attempts.

- Data extraction manipulation.

- Role escalation attempts.

- Guardrail bypass scenarios.

Secure & governed RAG architecture

RAG establishes control over how knowledge is retrieved and used. At SumatoSoft, we engineer retrieval-augmented generation as governed infrastructure. Every component protects intellectual property, enforces access boundaries, and ensures controlled behavior in regulated environments.

Dual-engine integration capability

Organizational knowledge rarely lives in one system. Because SumatoSoft engineers both traditional software and AI systems, we build the full bridge:

- Secure extraction from legacy ERP and SQL systems.

- Structured ingestion services.

- Normalized knowledge layers.

- Integration into modern vector infrastructure.

Isolated, production-grade deployment

Your proprietary data remains inside your cloud perimeter. We deploy RAG systems through:

- VPC-isolated infrastructure.

- Private LLM endpoints.

- Open-source model hosting in secure environments.

- Encrypted data channels.

At query time, only the relevant context is retrieved and transmitted to the model. The model processes the request within a controlled endpoint and does not retain data. Public training is excluded. Data exposure remains strictly controlled.

Governance embedded in the retrieval layer

Access control is implemented at the architectural level. Security is enforced before generation begins. The AI retrieves only what the authenticated user is authorized to access. We embed governance directly into retrieval logic, ensuring:

- User-aligned document access.

- Role-aware knowledge boundaries.

- Infrastructure-level enforcement.

- Audit-ready traceability.

Built for compliance-grade environments

We build systems that compliance teams can approve and CIOs can confidently support. Every deployment is engineered to satisfy governance requirements, including:

- Isolation boundaries.

- Encryption standards.

- Audit logging.

- Usage monitoring.

- Controlled retention policies.

PII protection & data safeguarding

Sensitive information requires structural protection. Before indexing, data can pass through controlled preprocessing layers designed to safeguard personally identifiable and regulated information. Retrieval operates within defined compliance boundaries while preserving data integrity and traceability.

Living knowledge infrastructure

Organizational knowledge changes daily. We design RAG systems as continuously aligned knowledge environments that reflect evolving policies, contracts, and operational records without manual rebuilding cycles. The system evolves together with your data.

Forecasting your AI ROI – no surprise cloud bills

A RAG system that answers correctly and consumes unlimited tokens creates financial risk. We engineer cost predictability from day one.

What we model before you scale

- Expected monthly token consumption.

- Infrastructure and vector database load.

- Scaling scenarios based on user growth.

- Cost comparison vs. current manual workflows.

You receive a projected operating cost range before full deployment begins.

How we reduce token waste

- Context compression and smart chunking.

- Hybrid retrieval to minimize prompt size.

- Re-ranking to prevent over-fetching.

- Model-size optimization per use case.

Well-architected RAG systems operate inside defined economic boundaries.

What you get

- Estimated monthly AI operating cost.

- Scaling cost forecast.

- ROI breakeven projection.

- Clear total cost of ownership (TCO) model.

AI built with financial predictability and operational control.

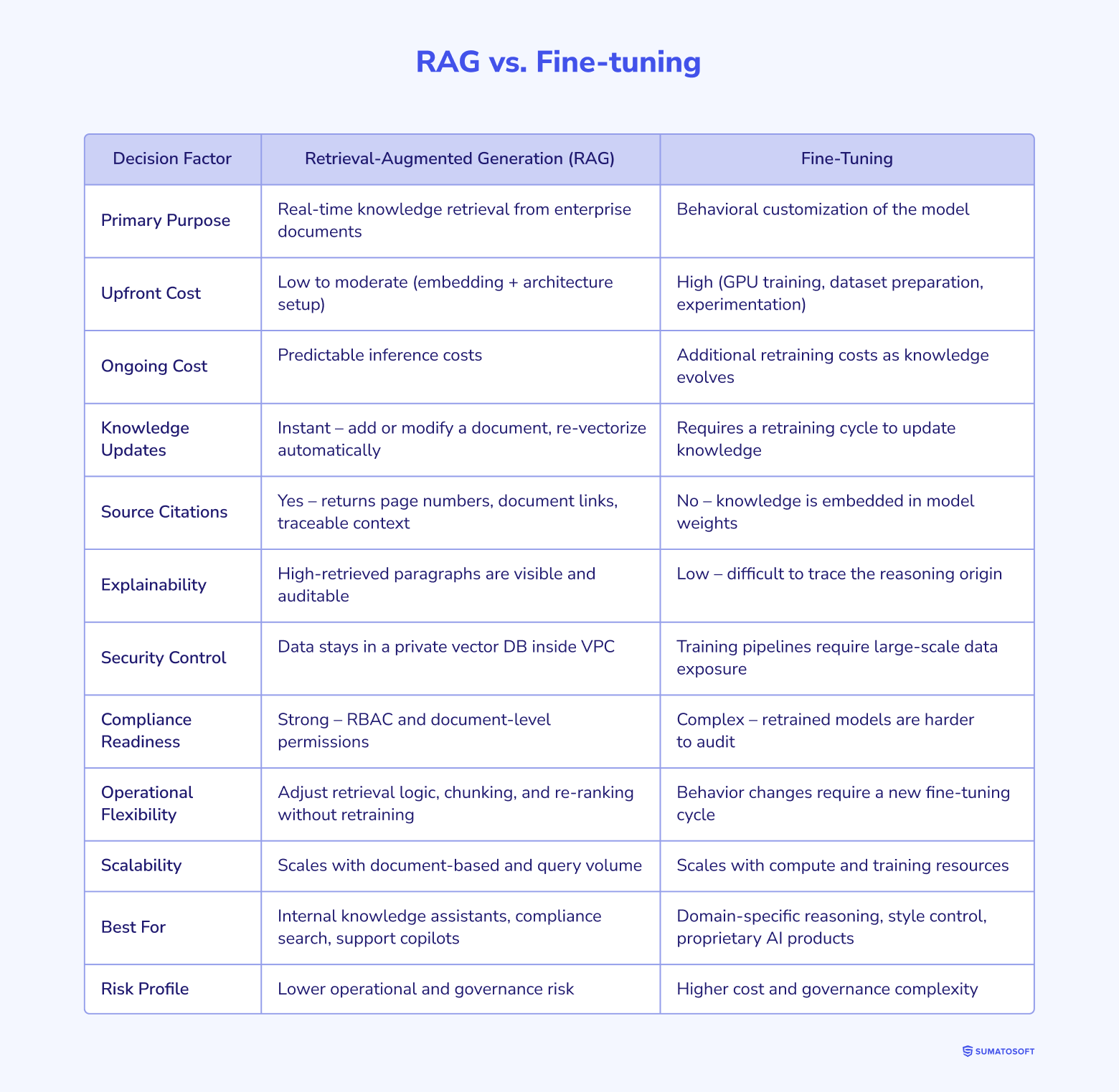

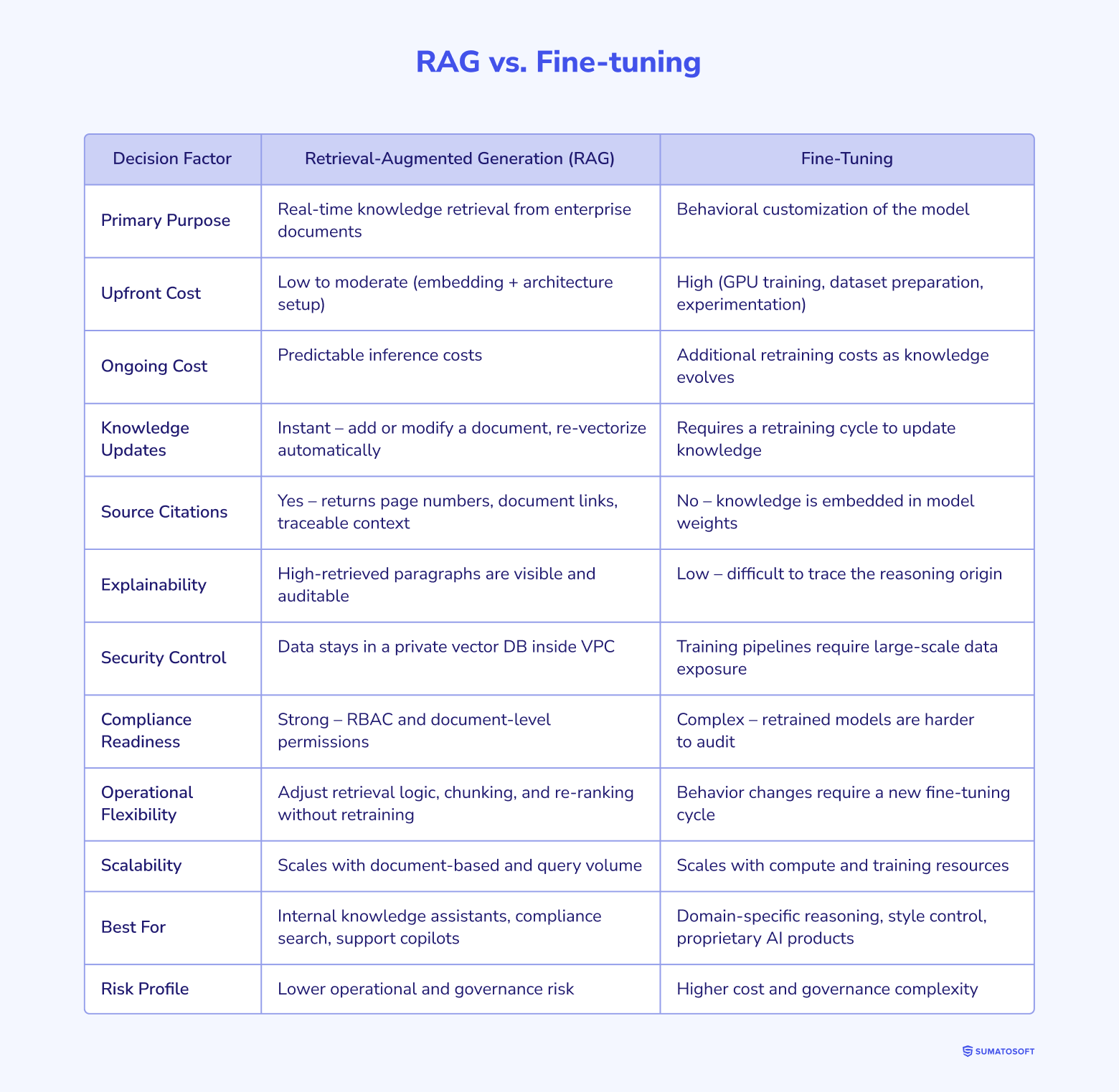

RAG vs. Fine-tuning – strategic decision matrix

For most enterprise knowledge systems, RAG delivers faster ROI, stronger governance, and lower operational risk.

Fine-tuning becomes strategically justified only when deep behavioral control or domain-specific reasoning is required.

Frequently asked questions

Can RAG understand complex tables and charts in our PDFs?

Yes. We use advanced OCR and specialized document parsing models such as Unstructured.io to ensure tables and images are vectorized correctly instead of being treated as raw text. As a professional RAG as a service provider, we help you to solve this issue.

Will the AI provide a source for its answers?

Yes. We engineer RAG prompts with deterministic grounding, forcing the LLM to output a direct link or page number to the exact internal document used to generate the answer.

Our data is scattered across scanned PDFs, legacy SQL databases, and SharePoint. Do we need to organize it before building a RAG system?

No. Handling messy data is a core part of our work. Before building the AI layer, our engineers create robust ETL pipelines – extract, transform, load. We use advanced OCR and semantic chunking to parse PDFs, accurately extract tables, and centralize the information in a clean vector database. We build both the data foundation and the AI system.

How can we trust the answers the AI gives? What happens if it hallucinates a company policy?

We eliminate hallucinations using deterministic grounding. The system prompt restricts the LLM strictly to retrieved context. If the answer is not present in your documents, the AI responds that there is not enough information to answer. Every response includes a clickable citation linking to the exact page and paragraph of the source document.

Will our proprietary contracts and internal data be sent to OpenAI and used to train public models?

No. We build RAG systems using VPC-isolated cloud architectures through AWS Bedrock or Azure OpenAI. Your data is indexed in a private vector database, and prompts are processed in a secure zero data retention environment. Your IP remains within your infrastructure and is never used to train public foundational models.

Enterprise GenAI tech stack

Talk to our AI experts

Get personalized advice for your unique project needs.

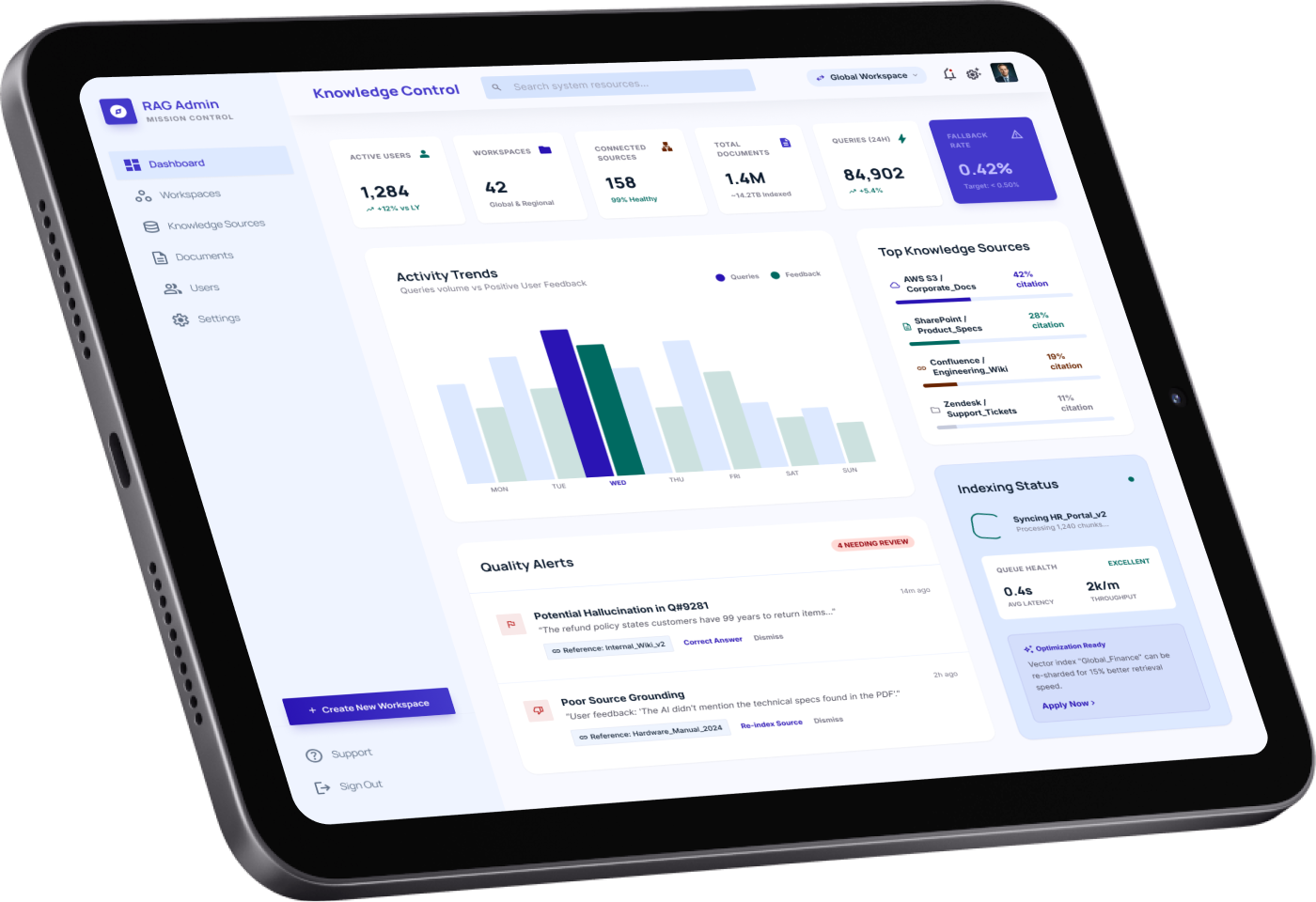

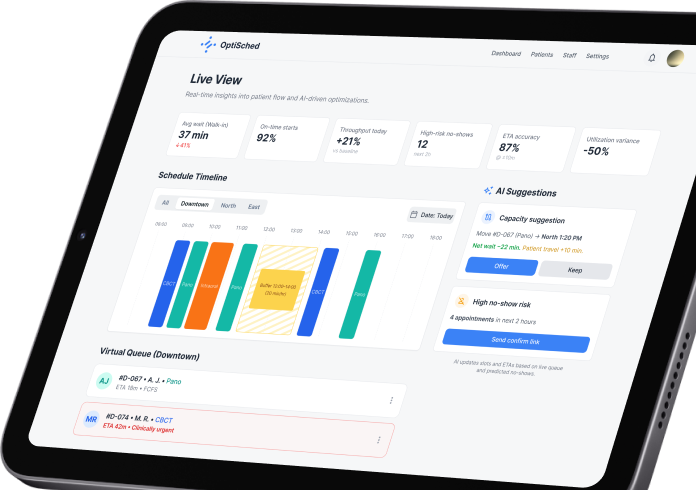

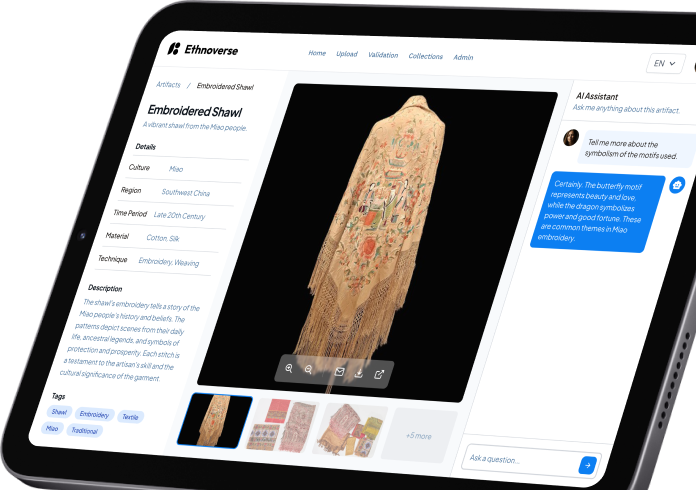

Our recent AI works

Prove the value of your data in 4 weeks

Avoid committing to a full rollout before seeing measurable results.

Our 4-week pilot & prove engagement allows you to validate technical feasibility, quantify ROI, and forecast operational token costs – before scaling to production. This is a fixed-scope, fixed-price sandbox designed to eliminate uncertainty.

We securely analyze a defined slice of your data (e.g., 500 HR documents, 1,000 support tickets, or one CRM dataset). We evaluate data quality, structure, access controls, and compliance constraints.

You receive:

- RAG feasibility confirmation.

- Data ingestion strategy.

- Security & deployment model recommendation (AWS Bedrock, Azure OpenAI, or private open-source).

We design and deploy a production-grade RAG sandbox inside a VPC-isolated environment.

This includes:

- Secure vector database setup.

- Hybrid search (semantic + keyword).

- Role-based access controls (RBAC).

- PII masking pipeline (if required).

- Deterministic grounding prompts.

Your data remains fully private. Nothing trains public models.

We measure system performance using defined evaluation criteria. Using automated evaluation frameworks (such as RAGAS), we score:

- Faithfulness – the model strictly uses retrieved documents.

- Context precision – the retrieval layer supplies the correct data.

- Answer accuracy – the output matches ground truth.

We also perform prompt-injection red-teaming to stress test security guardrails.

Before scaling, we simulate real-world usage.

Rewards & Recognitions

Let’s start

If you have any questions, email us info@sumatosoft.com