AI readiness assessment for secure, ROI-backed adoption

SumatoSoft’s fixed-scope AI readiness assessment reviews your data architecture, infrastructure, security, and ROI assumptions before you invest in development, providing a roadmap for AI adoption.

Strong AI starts with a ready foundation

Generative AI can improve search, summarization, classification, and workflow automation. To do that well, it needs structured data, clear access rules, and an environment that can support secure deployment.

That is why the AI readiness assessment comes first. Before you invest in AI software development services, a pilot, or a broader rollout, it helps to confirm that your data foundation and delivery environment can support the outcome you want.

A solid foundation helps you secure:

Protected data

Sensitive information remains limited to authorized users and approved workflows.

Cost visibility

Cloud and token spend are easier to forecast before development starts.

Compliance coverage

Security, auditability, and retention requirements are built into the design from the start.

Workflow fit

AI adds value to the process instead of sitting on top of existing inefficiencies.

Take a free basic AI readiness assessment

Answers each stakeholder needs before approving AI use

Technical fit

We assess whether your systems, integrations, cloud setup, and access model can support AI without creating architectural debt.

Budget clarity

We estimate the likely cost of the target use case, including infrastructure, model usage, and delivery effort.

Operational value

We identify where AI can reduce delays, remove manual work, improve throughput, or integrate with legacy software.

Security and compliance

We map the controls needed to protect sensitive data and support regulated workflows.

AI readiness assessment: what’s in it

Our AI assessment is a technical review of the four conditions that decide whether artificial intelligence can work inside your business and whether it will deliver measurable value.

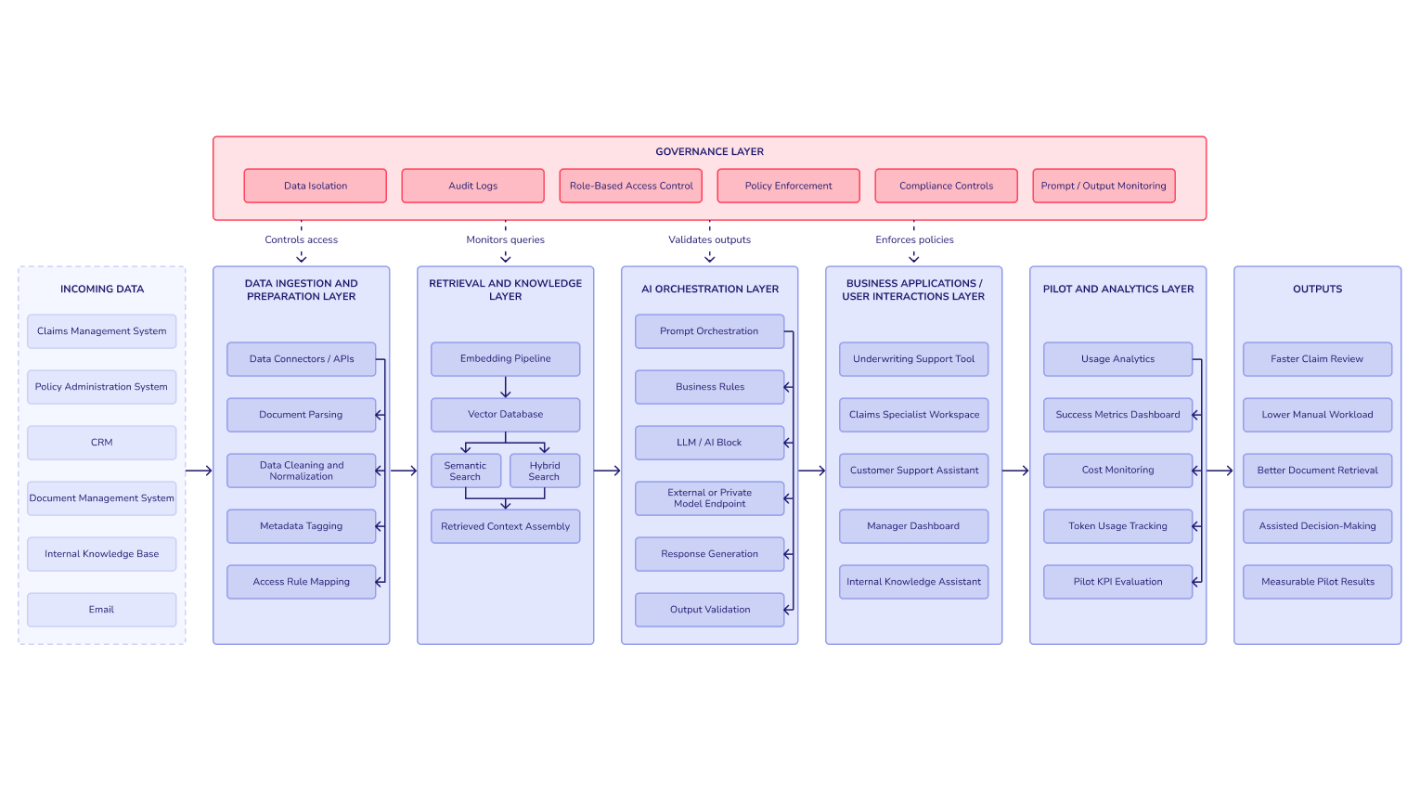

Data architecture and hygiene

We review where your data lives, how it moves, who owns it, and whether it’s usable for retrieval, classification, summarization, or agent workflows. This includes databases, SaaS exports, APIs, ETL jobs, metadata quality, and access logic. If a RAG system is the likely fit, we assess whether your environment can support chunking, indexing, embeddings, and retrieval quality.
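To make the chunking step concrete, here is a minimal Python sketch of word-based chunking with overlap, one common way to prepare documents for indexing. The function name `chunk_words` and the default sizes are illustrative assumptions, not part of any SumatoSoft tooling; real pipelines often chunk by tokens, headings, or sentences instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks that share `overlap` words.

    Hypothetical helper: the sizes are placeholder defaults, not tuned values.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current window has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(str(i) for i in range(10))
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

The overlap keeps context that straddles a chunk boundary available in both neighboring chunks, which tends to help retrieval quality at the cost of a larger index.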

Cloud and infrastructure readiness

We check whether legacy modernization is needed and feasible. The review covers cloud maturity, networking, observability, environment separation, secrets handling, logging, and the fit of options such as Azure OpenAI, AWS Bedrock, open-source models, or a hybrid setup.

Security and governance

We map the control model around the use case: who can see what, which data is regulated, where human approval must remain in the loop, what logs are needed for audits, which risks are acceptable, and which should block launch. Security and compliance are core engineering concerns across our AI and custom software work, including ISO 27001 and support for frameworks such as GDPR, HIPAA, SOC 2, and the EU AI Act.

Token economics and ROI

We estimate the cost to build and run the use case. That includes model calls, storage, vector database needs, hosting, monitoring, support effort, and likely growth scenarios. The goal is to see whether the business case holds before development begins.
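As a rough illustration of the model-call part of that estimate, the Python sketch below multiplies request volume by token counts and per-1,000-token prices. Every number in it is a placeholder assumption: the prices are not real vendor rates, and the names `UsageScenario` and `monthly_model_cost` are hypothetical. Storage, hosting, monitoring, and support effort would be added on top.

```python
from dataclasses import dataclass


@dataclass
class UsageScenario:
    """Hypothetical monthly usage profile for one AI use case."""
    requests_per_month: int
    input_tokens_per_request: int
    output_tokens_per_request: int


def monthly_model_cost(
    scenario: UsageScenario,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Estimate monthly spend on model calls only.

    Prices are per 1,000 tokens and must come from your provider's
    current price list; the values used below are placeholders.
    """
    input_cost = (
        scenario.requests_per_month
        * scenario.input_tokens_per_request / 1000
        * input_price_per_1k
    )
    output_cost = (
        scenario.requests_per_month
        * scenario.output_tokens_per_request / 1000
        * output_price_per_1k
    )
    return input_cost + output_cost


if __name__ == "__main__":
    baseline = UsageScenario(
        requests_per_month=10_000,
        input_tokens_per_request=1_500,
        output_tokens_per_request=300,
    )
    # Compare the baseline with assumed growth scenarios (2x and 5x traffic).
    for growth in (1, 2, 5):
        grown = UsageScenario(
            requests_per_month=baseline.requests_per_month * growth,
            input_tokens_per_request=baseline.input_tokens_per_request,
            output_tokens_per_request=baseline.output_tokens_per_request,
        )
        cost = monthly_model_cost(grown, input_price_per_1k=0.002, output_price_per_1k=0.006)
        print(f"{growth}x traffic: about {cost:.2f} per month in model calls")
```

Running the same function across several growth scenarios shows how quickly token spend scales with traffic, which is the kind of sensitivity a business case needs to survive.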

AI Readiness Checklist

Partner with reliable AI experts to build your software.

From disconnected systems to AI-ready architecture

Disconnected systems

- Unstructured PDFs in shared drives

- Legacy ERP records with no clean API layer

- SaaS tools that do not speak to one another

- Access rights that grew over time without discipline

Teams want to add a copilot or an agent on top of this stack and hope the model will sort it out. What happens instead is uneven retrieval, wrong answers, and a serious risk of exposing data to the wrong users.

Secure AI-ready blueprint

- Source systems are mapped and prioritized

- Data moves through controlled ETL or event pipelines

- Sensitive domains are segmented

- Content is indexed with explicit ownership and retention rules

- Retrieval sits behind role-based access

- Model access is routed through a private, policy-controlled layer

- Human review stays in the workflow where risk demands it

This is what an AI readiness assessment should produce: a clean path from source data to governed output.
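The "retrieval sits behind role-based access" item in the blueprint can be sketched in Python as follows. This is a simplified illustration under stated assumptions: `Document`, `allowed_roles`, and `retrieve` are hypothetical names, and keyword overlap stands in for the embedding-based ranking a production system would use. The point it shows is the ordering: permissions are applied before ranking, so content a user may not see never reaches the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical indexed item carrying its own access rule."""
    doc_id: str
    text: str
    allowed_roles: frozenset[str]


def retrieve(
    query: str,
    docs: list[Document],
    user_roles: set[str],
    top_k: int = 3,
) -> list[Document]:
    """Return the best-matching documents the user is allowed to see."""
    # Filter first: unauthorized documents are dropped before any ranking.
    visible = [d for d in docs if d.allowed_roles & user_roles]

    # Toy relevance score: number of query words that appear in the text.
    terms = set(query.lower().split())
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


if __name__ == "__main__":
    index = [
        Document("handbook", "vacation policy for all staff", frozenset({"employee", "hr"})),
        Document("salaries", "salary bands and vacation payout", frozenset({"hr"})),
    ]
    print([d.doc_id for d in retrieve("vacation policy", index, {"employee"})])
    print([d.doc_id for d in retrieve("vacation policy", index, {"hr"})])
```

Filtering before ranking, rather than after generation, means a prompt can never contain text the requesting user is not cleared to read.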

Our 2-week audit timeline

We start with an NDA and a structured kickoff. Then we interview stakeholders across technology, operations, and business ownership to define the target problem and its boundaries. After that, our team runs a read-only review of your systems, data sources, integrations, and cloud setup.

We define the likely solution path, identify technical blockers, model the security boundary, and estimate cost. By the end of the second week, you receive an explicit recommendation: proceed to pilot, fix your foundation first, or solve the problem with deterministic software instead of AI.

No-hidden-agenda guarantee and deliverables

Many firms that sell AI assessments have only one path to monetization: they need your answer to be “build AI.” That creates pressure to force a use case into the wrong shape.

SumatoSoft works differently. We are a software engineering company with deep AI capability, not an AI-only shop. If the review shows that your foundation is weak, we will say so. If the target outcome is better served by deterministic software, we will say that too. If the right answer is data cleanup, integration work, or architecture modernization before any model is introduced, that will be the recommendation.

Deliverables: What you receive on day 14

- Executive readiness scorecard

A red-yellow-green view of your data, infrastructure, security, and ROI readiness.

- Data remediation plan

A focused document that shows what must change before AI can be deployed with confidence.

- Target architecture blueprint

A high-level design for the recommended first use case, including model approach, data flow, security boundary, and integration points.

- Next-step recommendation

One of the following routes: data modernization, fixed-scope pilot, or production build planning.

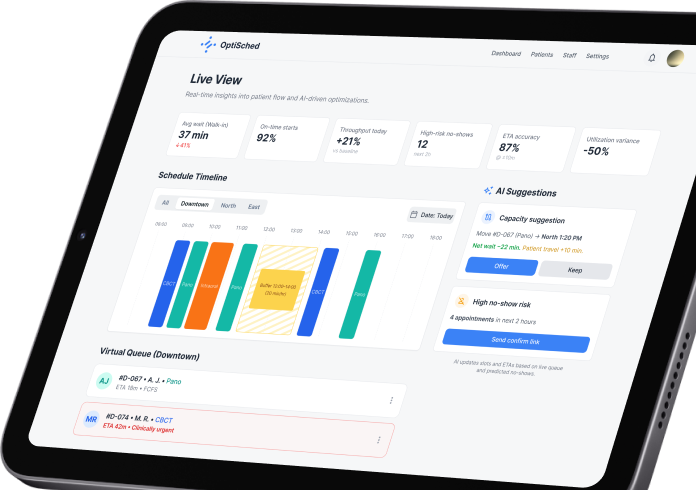

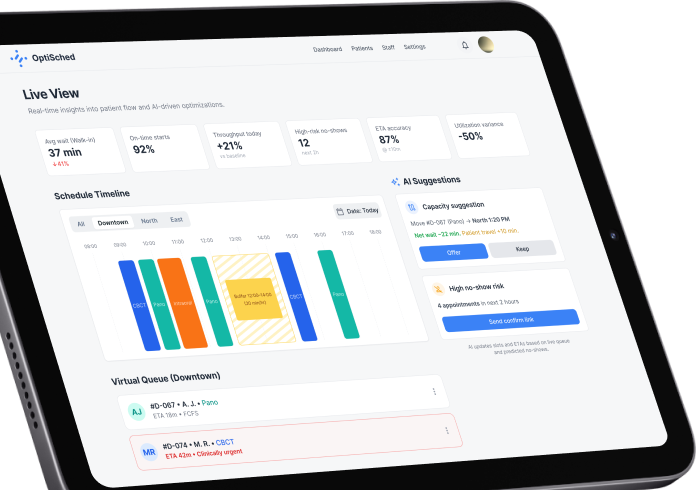

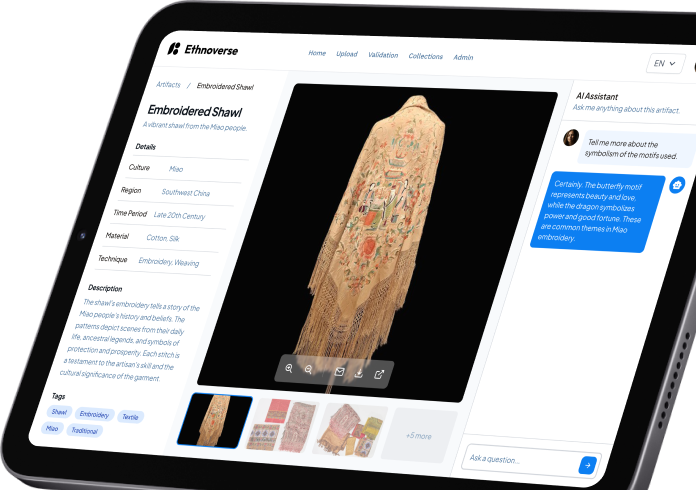

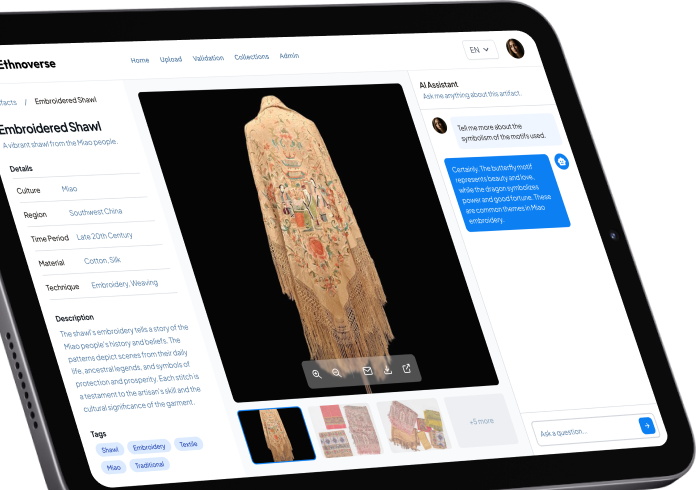

Our recent AI projects

Frequently asked questions

What is an AI readiness assessment, and how is it different from an AI maturity assessment?

An AI readiness assessment answers a near-term delivery question: can this business support a given AI initiative with a fair chance of success? An AI maturity assessment is broader. It looks at how advanced your organization is across strategy, culture, governance, and enablement. Readiness concerns launch conditions, while maturity concerns longer-range capability.

Why is an AI readiness assessment important for enterprises?

Because enterprise AI operates within constraints: data ownership, access control, compliance, integration debt, and budget discipline. Each of these shapes whether a use case can move from idea to production. Without that review, teams often fund pilots that never scale.

Do AI readiness assessments help decide whether we should build AI at all?

Yes. A strong AI assessment should be able to say “do not build this with AI” when the use case calls for rules-based software, workflow redesign, or data modernization first.

What are the key components of an artificial intelligence assessment?

At minimum, it should cover data quality and accessibility, infrastructure and hosting fit, security and governance, and expected economics. For enterprise work, it should also define ownership, approval points, and integration limits.

What is a generative AI assessment, and how is it different?

A generative AI assessment focuses on use cases that involve LLMs, RAG, copilots, summarization, document search, content generation, or agent-like behavior. It pays more attention to retrieval quality, hallucination risk, prompt controls, model-hosting options, and token spend.

Talk to our AI Expert

Get personalized advice for your AI project needs.

What we tend to find during AI assessments: use cases

Security gaps

A company wants to connect a generic AI assistant to internal documentation. During the audit, it turns out the current permissions model would expose salary data, HR files, or legal material far beyond the intended audience. We redesign the access pattern before any model is connected.

Cost inefficiencies

Leadership assumes they need a custom model built from scratch. The review shows that a narrower RAG setup, a smaller open model, or fine-tuning on a limited dataset can reach the target much faster and at a fraction of the cost.

Architecture blockers

A promising AI use case depends on data that still sits in an old on-premise system with weak integration support. The right move is to modernize data and clean up interfaces before moving on to AI pilot development.

Delivery constraints

A company’s stated needs sound like agentic AI, but the process only requires deterministic workflow software, better search, and tighter routing. We recommend a simpler stack to keep the budget in check.

Path from assessment to deployment

Let’s start

If you have any questions, email us at info@sumatosoft.com.