LLM development services for enterprises to control their intelligence

SumatoSoft designs and deploys LLM systems for companies that need stronger control over data, infrastructure, and model behavior. We help you choose the right path, from retrieval-based systems built on proven models to fine-tuned open-source models and proprietary model development for narrow, high-value domains.

Our LLM engineering services

Most of the model development work sits in data preparation, system design, deployment planning, and evaluation. SumatoSoft builds the full LLM delivery path, from ingestion pipelines and model adaptation to inference optimization and production rollout in your cloud or internal environment.

Data curation and pipeline engineering

Model quality depends on data quality. We build the pipelines that ingest, filter, de-duplicate, structure, chunk, and tokenize enterprise data before it reaches the model. This includes work with documents, internal records, product knowledge, support content, and domain-specific text corpora.

The goal is to make the training or retrieval layer robust. When the input data is inconsistent, outdated, or poorly structured, the model output becomes unreliable. We reduce that risk upstream.
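As a rough illustration of two of the pipeline steps named above, de-duplication and chunking, here is a minimal sketch in plain Python. The function names, the hash-based exact-match strategy, and the word-window sizes are assumptions chosen for the example, not a description of a specific production pipeline.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies hash the same."""
    return re.sub(r"\s+", " ", text).strip().lower()


def deduplicate(docs: list[str]) -> list[str]:
    """Keep the first occurrence of each document, comparing normalized text."""
    seen: set[str] = set()
    unique: list[str] = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


def chunk_words(text: str, size: int = 200, overlap: int = 20) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

Exact-hash matching only catches copies that differ in case or whitespace. Near-duplicate detection (for example, on edited versions of the same policy) would need a fuzzier technique.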

Model fine-tuning with PEFT and LoRA

When prompt design and retrieval are not enough, we fine-tune open-source models for narrower tasks and stronger domain fit. We use parameter-efficient methods such as LoRA to adapt the model to your vocabulary, response format, reasoning patterns, and content rules without the cost of full-scale retraining.

This approach works well when you need a model to write in a defined format, classify domain content, extract structured information, or support internal workflows where consistency matters more than general-purpose breadth.
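To make the cost argument concrete: LoRA freezes the original weight matrix and trains two small low-rank matrices whose product is added to it. The sketch below only does the parameter arithmetic for one layer. The layer size and rank are illustrative assumptions, not values from a specific project.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters in a dense weight matrix of shape (d_out, d_in)."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters LoRA adds for that matrix.

    LoRA trains B with shape (d_out, rank) and A with shape (rank, d_in),
    while the original matrix stays frozen.
    """
    return rank * (d_out + d_in)


def trainable_fraction(d_out: int, d_in: int, rank: int) -> float:
    """Share of the layer's parameter count that LoRA actually trains."""
    return lora_trainable_params(d_out, d_in, rank) / full_params(d_out, d_in)


# Hypothetical 4096 x 4096 projection adapted with rank 8.
print(lora_trainable_params(4096, 4096, 8))  # 65536
print(trainable_fraction(4096, 4096, 8))     # 0.00390625
```

In this example the adapter trains under half a percent of the layer's parameters, which is where most of the savings over full retraining come from.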

Custom model training

Some companies need more than adaptation. When the business case supports it, we design and train proprietary models on large internal datasets with full control over the architecture, training process, and deployment path.

This work includes training strategy, experiment design, hyperparameter tuning, distributed training orchestration, evaluation pipelines, and production preparation. We recommend this route only when the data volume and expected return justify the cost.

Inference optimization and quantization

A model has to be affordable to run after it’s built. We optimize inference so the system can operate with lower latency, lower infrastructure spend, and tighter deployment constraints. That includes quantization, model compression, serving optimization, and runtime tuning across cloud, on-premises, and edge environments.

This is often what makes an LLM system viable beyond the pilot stage. A model that performs well in testing still has to meet cost, speed, and infrastructure requirements in production.
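For readers unfamiliar with quantization, the following is a minimal sketch of symmetric int8 quantization on a plain list of weights. It is a teaching example under simplifying assumptions (one scale for the whole tensor, no calibration data); production toolchains use more refined schemes.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [1.27, -0.64, 0.0, 0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored value differs from the original by at most about half a scale step.
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now occupies one byte instead of two or four, at the price of a bounded rounding error per value.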

Build vs. Buy vs. Adapt

The goal is to solve your business problem with the right level of engineering.

Tier 1. Enterprise RAG

You do not need a new model if the main issue is access to internal knowledge. In this setup, we connect your documents, records, and source systems to a secure model through retrieval pipelines, vector search, and permissions-aware access controls.

- Best fit for: Internal search, document Q&A, policy lookup, support knowledge tools.

- What you get: Faster time to value, lower model risk, and stronger grounding in enterprise data.
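The idea of permissions-aware retrieval can be sketched in a few lines: filter the candidate chunks by the user's access groups first, then rank what remains. Everything here is a simplification for illustration. The `Chunk` type and group names are hypothetical, and the word-count "embedding" stands in for a real embedding model and vector store.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this chunk


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], user_groups: set[str], top_k: int = 3) -> list[Chunk]:
    """Drop chunks the user may not see, then return the top_k most similar ones."""
    visible = [c for c in chunks if c.allowed_groups & user_groups]
    q = embed(query)
    ranked = sorted(visible, key=lambda c: cosine(q, embed(c.text)), reverse=True)
    return ranked[:top_k]
```

Filtering before ranking means restricted content never reaches the prompt, so the model cannot leak it in an answer.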

Tier 2. LLM Fine-Tuning

Fine-tuning makes sense when the model needs to follow your terminology, output format, and domain logic more closely than prompt design or retrieval can support. We adapt an open-source model to the task with techniques such as LoRA and other parameter-efficient methods.

- Best fit for: Medical drafting, legal review support, domain-specific copilots, proprietary coding workflows.

- What you get: More consistent outputs, tighter domain alignment, and lower compute cost than full model training.

Tier 3. Custom Pre-Training

Custom pre-training is the right path only when you have large proprietary datasets, strict deployment requirements, or a strong reason to reduce dependence on external model providers. This is the heaviest option, so we recommend it only when the business case supports the cost and complexity.

- Best fit for: Highly regulated environments, proprietary research, large-scale domain corpora, zero-dependency model strategy.

- What you get: Greater control over the model stack, tighter fit to internal data, and stronger long-term independence.

Book your free discovery call

Discuss your business challenge with our LLM development experts and find out exactly how we can solve it.

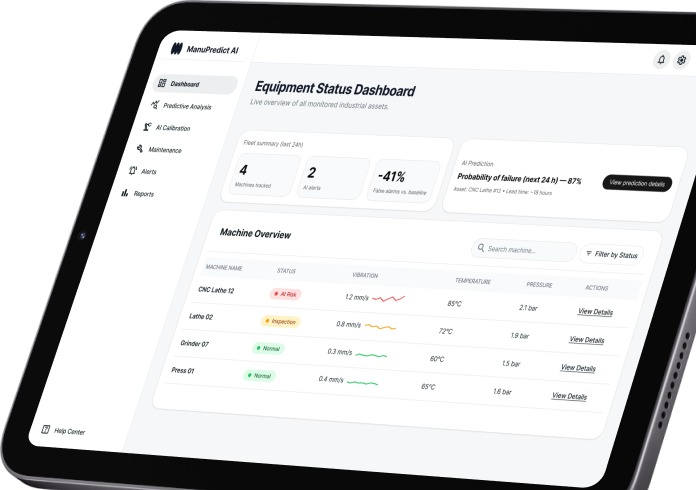

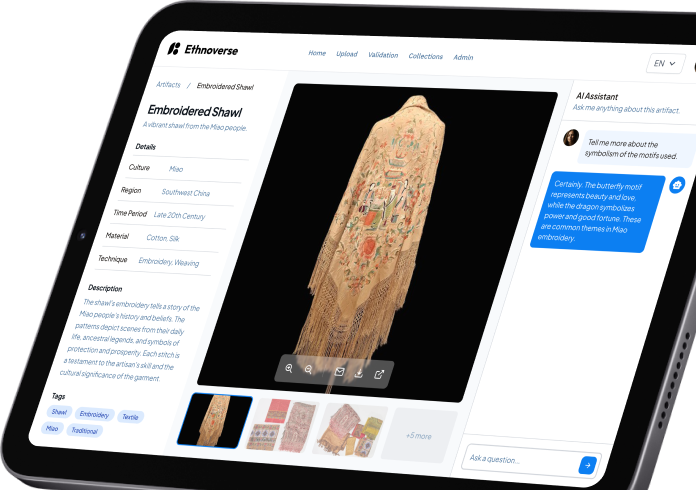

Total cost of ownership (TCO)

External model APIs are easy to start with, but usage-based pricing can become expensive at scale. A hosted custom model can lower long-term inference cost when the workload is steady enough.

What we compare during scoping or pilot work

- API path: Token-based usage costs, vendor dependence, scaling curve, and integration overhead.

- Hosted model path: Infrastructure cost, serving setup, maintenance effort, and expected unit economics over time.

What you get

A side-by-side cost view tied to your projected usage, deployment model, and operating constraints.
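A simplified version of that comparison is a break-even calculation: at what monthly token volume does a fixed-cost hosted model become cheaper than usage-based API pricing? The sketch below assumes a flat per-token API price and a fixed monthly hosting cost. All figures are made-up placeholders, not real vendor prices.

```python
def api_monthly_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Usage-based cost: scales linearly with token volume."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def hosted_monthly_cost(infrastructure: float, maintenance: float) -> float:
    """Fixed cost of running your own model, independent of volume in this sketch."""
    return infrastructure + maintenance


def break_even_tokens(price_per_million_tokens: float, infrastructure: float, maintenance: float) -> float:
    """Monthly token volume above which the hosted path is cheaper."""
    hosted = hosted_monthly_cost(infrastructure, maintenance)
    return hosted / price_per_million_tokens * 1_000_000


# Placeholder inputs: 10.0 per million tokens, 4000 infrastructure, 1000 maintenance.
print(break_even_tokens(10.0, 4000.0, 1000.0))  # 500000000.0
```

A real comparison would also account for capacity limits on the hosted side, since hosting cost steps up once one deployment can no longer serve the traffic.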

Why companies choose SumatoSoft for LLM development

Deep engineering coverage

We build the system end-to-end. That includes the data and model layers, the serving setup, and the surrounding software.

Architecture matched to the use case

We start with the business problem, then choose the lightest architecture that can do the job well. Sometimes that means RAG. Sometimes it means targeted model adaptation. We move to a heavier build only when the case supports it.

Integration built into delivery

We treat integration as core engineering work. Our team designs LLM systems to work with older enterprise systems and newer business applications.

Deployment shaped around constraints

We deploy in private cloud, on internal infrastructure, or in local environments when the use case calls for it. The choice depends on data-handling rules, response targets, hardware limitations, and long-term costs.

Prototype your AI product

From “napkin sketch” to MVP. Our rapid development sprints help you launch an LLM-powered feature in weeks, not months.

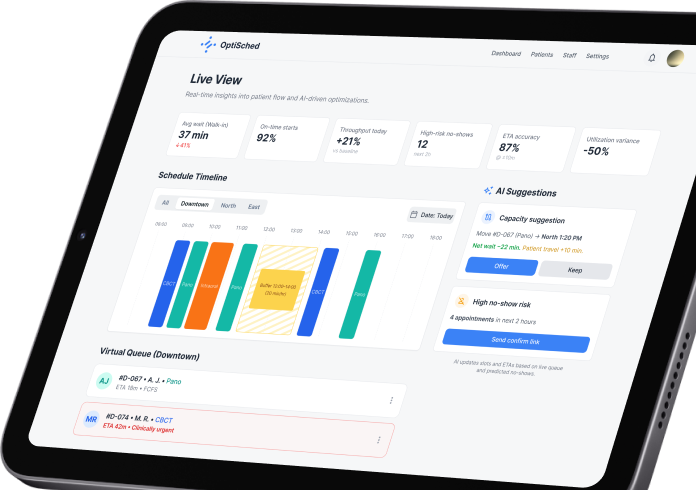

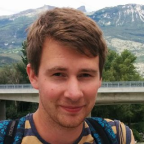

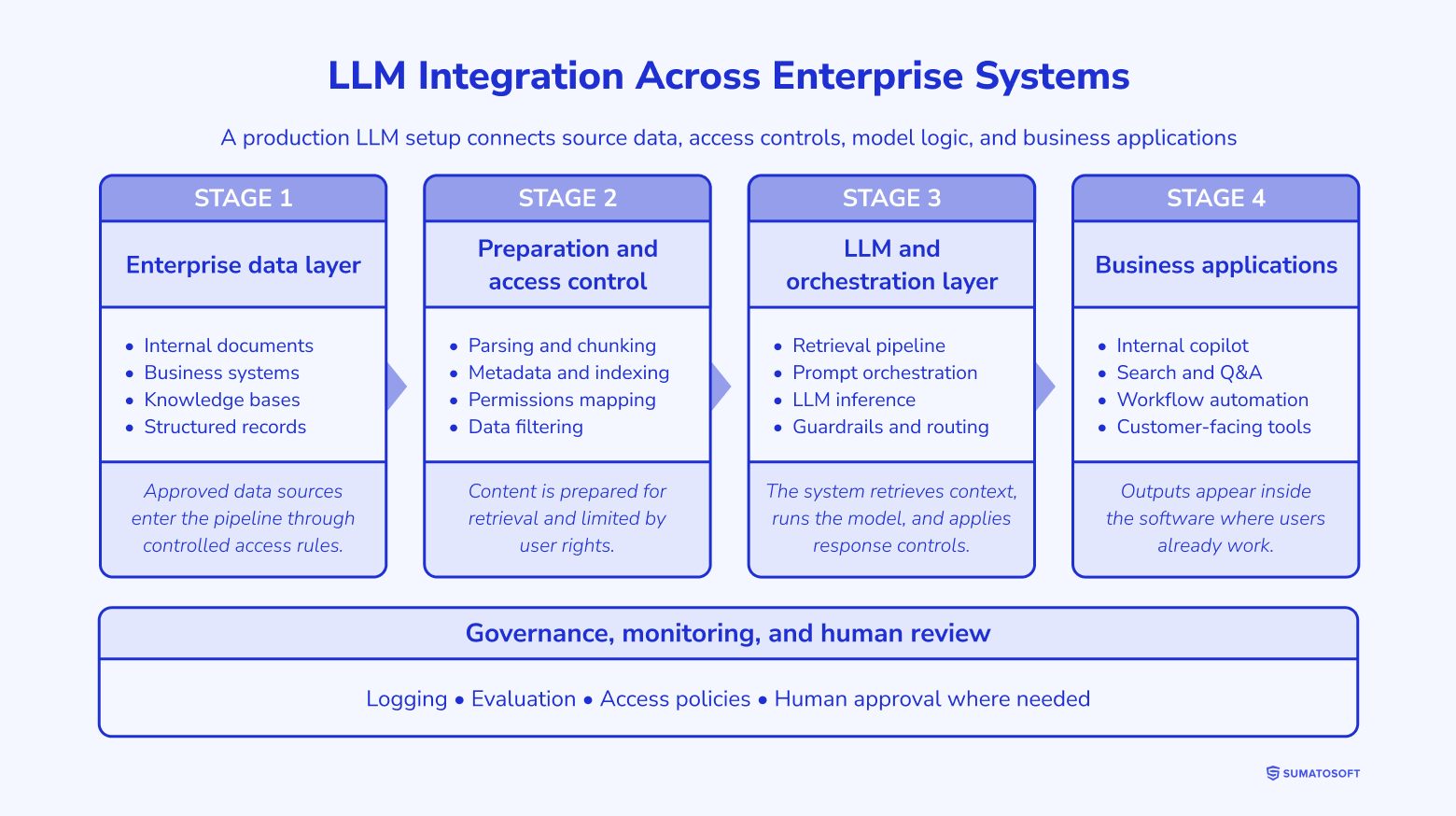

Dual-engine integration

A strong model only delivers value once it connects to the systems your teams already use, returns outputs inside real workflows, and respects existing permissions.

Integration into modern software

We connect LLM functionality to web platforms, SaaS products, customer portals, internal dashboards, and workflow tools. That includes API integration, retrieval layers, user-facing interfaces, and orchestration logic that moves model outputs to the appropriate step in the process.

For companies building AI-enabled features into existing products, this is often the fastest route from prototype to live use.

Integration into legacy systems

We work with older ERP, CRM, document systems, internal databases, and on-premise applications that were never designed for LLM workflows. Our teams build the middleware and interface layer needed to connect those systems to the model without disrupting daily operations.

This matters when the value sits inside older platforms, but the business still needs modern AI capabilities on top of them.

Software development around the model

Some use cases need a dedicated application around the model. We build internal tools, external products, admin panels, review interfaces, and workflow systems that make LLM outputs usable in context.

That can include document review environments, support agent workspaces, knowledge tools, internal copilots, and domain-specific applications built around retrieval, generation, or classification tasks.

Access, governance, and workflow control

Enterprise LLM systems need access boundaries, auditability, fallback logic, human review points, and system-level controls. We build these controls into the application and integration layer so the model fits your operating environment.
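One way to picture these controls is a thin gate around the model call that logs every request, routes low-confidence answers to a human reviewer, and falls back gracefully when the model fails. This is a sketch under stated assumptions: the `Gate` class, the route names, and the idea that the model callable returns a confidence score alongside its answer are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Decision:
    route: str   # "auto", "human_review", or "fallback"
    answer: str


@dataclass
class Gate:
    review_threshold: float = 0.7                      # assumed cut-off for auto-approval
    audit_log: list[dict] = field(default_factory=list)

    def handle(self, user: str, prompt: str,
               model: Callable[[str], tuple[str, float]]) -> Decision:
        """Call the model, pick a route, and record the outcome for auditing."""
        try:
            answer, confidence = model(prompt)
        except Exception:
            # Fallback logic: never surface a raw failure to the user.
            decision = Decision("fallback", "The assistant is unavailable. Your request was forwarded.")
        else:
            route = "auto" if confidence >= self.review_threshold else "human_review"
            decision = Decision(route, answer)
        self.audit_log.append({"user": user, "prompt": prompt, "route": decision.route})
        return decision
```

Keeping this logic in the application layer, outside the model, means thresholds and review rules can change without retraining anything.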

Flexible deployment

We base deployment decisions on data sensitivity, latency targets, hardware limits, and long-term operating cost.

Private cloud deployment

We deploy the model inside your private cloud environment (AWS, Azure, or Google Cloud) and align it with your internal security model. The setup includes isolated infrastructure, role-based access controls, monitoring, and the service layer that connects the model to your systems.

Best fit for: Companies that need stronger control over data handling and runtime setup without moving the full workload onto internal servers.

On-premises deployment

When internal policy or system constraints require local hosting, we adapt the model to run on your own infrastructure. This work covers inference tuning, capacity planning, containerized rollout, and support for internal networking requirements.

Best fit for: Organizations that need tighter control over where the model runs, how data is stored, and how the serving environment is managed.

Edge and local inference

Some LLM use cases need to run close to devices, equipment, or field operations where connectivity is limited or response time matters. In these cases, we reduce model size through quantization and runtime optimization, enabling inference to occur locally on constrained hardware.

Best fit for: Factory environments, mobile workflows, offline field tools, and device-level assistants.
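A quick way to see why quantization matters on constrained hardware is to estimate the memory the weights alone require at different bit widths. The sketch below is back-of-the-envelope arithmetic. The 7-billion-parameter model, the 8 GB device, and the 80% headroom factor are illustrative assumptions, and the estimate ignores activations and cache memory.

```python
def weight_memory_gb(n_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_on_device(n_params: int, bits_per_weight: int,
                   device_memory_gb: float, headroom: float = 0.8) -> bool:
    """Check whether the weights fit in a share of device memory.

    `headroom` reserves the remainder for activations, cache, and the runtime.
    """
    return weight_memory_gb(n_params, bits_per_weight) <= device_memory_gb * headroom


params = 7_000_000_000  # hypothetical model size
print(weight_memory_gb(params, 16))   # 14.0
print(weight_memory_gb(params, 4))    # 3.5
print(fits_on_device(params, 16, 8.0))  # False
print(fits_on_device(params, 4, 8.0))   # True
```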

Technology stack

The stack changes with the scope and model path. These are the tools and platforms we use most often in LLM projects.

Who builds the system

LLM delivery takes data engineering, infrastructure design, software integration, and production oversight. Our engineering team includes:

Data architects

NLP and ML engineers

LLMOps specialists

Software engineers

Our ADLC process

We use the Agentic Development Lifecycle to move from business needs to production in controlled stages. AI helps us speed up analysis, draft parts of the solution, generate code scaffolds, and expand test coverage. Our engineers review, edit, and validate that work before it moves forward.

We map the business task, target users, success criteria, and operating constraints. AI may help summarize source materials or group requirements, but our team sets the final scope and delivery plan.

We assess source data, access rules, software dependencies, and deployment limits. AI can help process large volumes of content and surface patterns. Our engineers verify the findings and choose the right path for the project.

We design the architecture, then build the data pipelines, model layer, serving setup, and integrations. AI may assist with code drafts, documentation drafts, and test generation. Developers revise that output and harden it for production use.

We test output quality, failure handling, latency, cost, and workflow fit. AI can help generate edge cases and test scenarios. Our team reviews the results, tunes the system, and adds human review steps where risk warrants them.

We deploy with monitoring, versioning, access control, and update workflows. After launch, we track system behavior, review output quality, and refine the solution as requirements change.
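The testing stage described above, which checks output quality and latency, can be approximated with a very small harness. This is a minimal sketch: the substring check is a deliberately crude stand-in for real quality metrics, and the `evaluate` function and its case format are assumptions made for the example.

```python
import time
from statistics import mean
from typing import Callable


def evaluate(model: Callable[[str], str], cases: list[tuple[str, str]]) -> dict:
    """Run (prompt, expected substring) cases and report pass rate and mean latency."""
    passed = 0
    latencies: list[float] = []
    for prompt, expected in cases:
        start = time.perf_counter()
        output = model(prompt)
        latencies.append(time.perf_counter() - start)
        if expected.lower() in output.lower():
            passed += 1
    return {
        "pass_rate": passed / len(cases) if cases else 0.0,
        "mean_latency_s": mean(latencies) if latencies else 0.0,
    }
```

Running the same case set against every model or prompt revision gives a simple regression signal before changes reach production.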

Frequently asked questions

Who owns the model and the related assets after delivery?

Ownership terms depend on the engagement model, but for custom LLM work, the client typically receives full rights to the delivered solution. That can include model artifacts, pipeline logic, deployment setup, and the project’s integration layer.

Do we need to invest in GPU hardware before the project starts?

Not in most cases. Training and testing can run on cloud infrastructure provisioned for the engagement. For production, we size the environment around the chosen architecture, expected traffic, and cost target. In some cases, existing on-premise hardware is enough.

How long does fine-tuning usually take?

A secure sandbox or pilot version may be available within several weeks. Broader scopes take longer because evaluation, integration, and review logic add work beyond the model itself.

How secure are LLM systems built for enterprise use?

Security depends on system design. We shape the solution around access control, data isolation, audit requirements, infrastructure boundaries, and the way the model connects to internal systems. The level of control also depends on whether the system runs in a private cloud, on internal servers, or in another managed environment.

What drives the cost of LLM development services?

Cost depends mainly on the path you choose. A retrieval-based system on a proven model is the lightest option, fine-tuning an open-source model costs more, and custom pre-training is the heaviest. Beyond that, the main drivers are the volume and condition of your source data, the deployment environment (private cloud, on-premises, or edge), the amount of integration work, and the expected inference load in production.

Let’s start

If you have any questions, email us at info@sumatosoft.com.