Enterprise GenAI integration services. Zero data risk.

We integrate production-grade generative AI directly into your existing applications, workflows, and data systems. AI operates inside your infrastructure, aligned with your business logic and security requirements.

- No endless pilots

- No vendor lock-in

- Deployed, secure, measurable AI capabilities

Why most enterprise AI initiatives fail in production

You’ve seen what generative AI can do. You’ve tested use cases. You’ve explored pilots. Yet the transition from experimentation to production keeps stalling. The issue is rarely the model. It is the system around it – integration, governance, data control, and operational readiness. This is where most AI initiatives break.

The pilot graveyard

Your pilots work in controlled environments, then fail under real conditions. Production introduces fragmented data, edge cases, and compliance constraints that the pilot never accounted for. The result is a growing backlog of demos with no operational impact.

The integration iceberg

The model is only a small part of the system. The majority of effort sits in retrieval pipelines, access control, orchestration, evaluation, and monitoring. Without this foundation, AI remains disconnected from business operations.

The shadow AI problem

Teams already use generative AI – outside controlled environments. Sensitive data is shared through public tools without visibility or governance. This creates immediate exposure risks and long-term compliance issues.

The API wrapper trap

Connecting your system directly to a public LLM API creates fragile architecture. Data flows are uncontrolled, vendor dependency increases, and compliance boundaries become unclear. This approach does not meet enterprise requirements.

The hallucination liability

Generative AI produces outputs probabilistically. Without grounding and validation, responses can be incorrect or fabricated. In enterprise workflows, this leads to operational errors, reputational damage, and regulatory risk.

The big consulting bottleneck

Large consulting firms prioritize analysis before execution. Timelines extend, costs escalate, and delivery lags behind the pace of model evolution. By the time recommendations are finalized, the landscape has already shifted.

What we integrate. Where we integrate it.

Stack-agnostic. Model-agnostic. Cloud-agnostic. Built around your systems, your data, and your workflows.

Our AI solutions bring copilots directly into the tools your teams rely on. They generate responses, summarize information, and support decision-making within existing workflows, without introducing new interfaces.

RAG over proprietary data

Our services enable accurate, context-aware access to internal knowledge. Documents, tickets, databases, and structured data are unified into a retrieval layer that supports reliable question answering. Responses are grounded in verified company data, which keeps answers consistent across teams and reduces unsupported outputs.

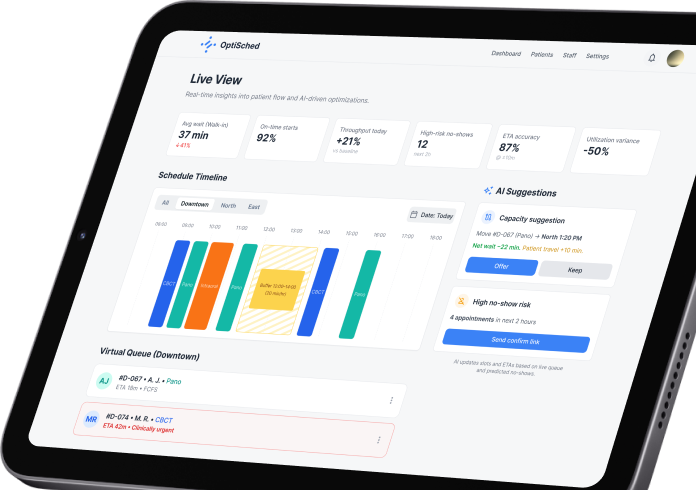

Agentic workflows

Our approach introduces AI agents that execute multi-step processes across systems. These agents retrieve data, trigger actions, generate outputs, and interact with APIs within clearly defined boundaries and approval logic.

Document intelligence

Our solutions transform unstructured documents into structured, usable information. Contracts, invoices, reports, and compliance materials are analyzed, classified, and converted into data that supports downstream systems and operational decisions.

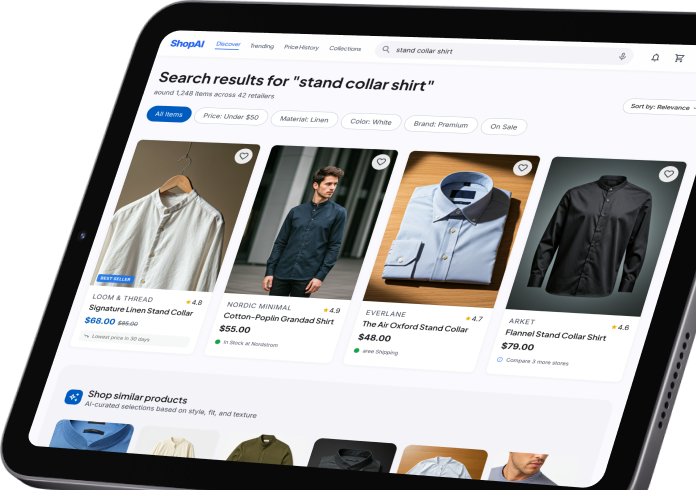

Customer-facing AI

Our integrations extend directly into your product experience. AI assistants guide users, support onboarding, provide contextual help, and personalize interactions based on real usage patterns and behavioral data.

Voice and multimodal systems

Our capabilities go beyond text-based interactions. Voice, images, documents, and recorded conversations are processed into structured insights, enabling summaries, analysis, and automated follow-ups across communication channels.

Model governance layer

Our architecture includes a centralized governance layer across all AI operations. Model selection, routing, access control, logging, and cost management are handled within a unified system, ensuring controlled and predictable behavior.

Data pipeline modernization

Our generative AI integration services establish the infrastructure required for reliable AI execution. Data pipelines, vector databases, embedding systems, and evaluation frameworks support retrieval, processing, and continuous system improvement.

Stack-agnostic by design

Our work adapts to your existing technology landscape – OpenAI, Anthropic Claude, Azure OpenAI, AWS Bedrock, Google Vertex AI, open-source models, your databases, your cloud, your identity provider. Integration is aligned with your current architecture.

Built for security teams. Approved by CISOs

We design GenAI systems the way enterprise security teams expect them to be built – controlled, auditable, and aligned with your existing governance model.

What we bring on day one

How security is enforced in practice

Designed for enterprise approval


Dual-engine AI architecture

We engineer generative AI as part of your system architecture. Our approach introduces a controlled integration layer between your deterministic software systems and probabilistic AI models. This allows AI to operate inside your infrastructure with defined behavior, governed access, and predictable outcomes. Your existing applications, data platforms, and workflows remain the foundation. We extend them with AI capabilities that follow the same rules, security boundaries, and operational logic as the rest of your system.

Private, air-gapped LLMs

We deploy models within your controlled environment – private cloud VPCs or on-premises infrastructure. Your data does not pass through public endpoints and is never used for external model training. This ensures full control over data flow, storage, and processing.

Enterprise RAG (retrieval-augmented generation)

We connect AI directly to your verified data sources. Documents, databases, tickets, and internal knowledge are structured, indexed, and retrieved in real time. Responses are grounded in your data and constrained to approved sources, ensuring consistent and reliable outputs.
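
To make the idea of retrieval constrained to approved sources concrete, here is a minimal sketch. It is illustrative only: the `Doc` type, the `APPROVED_SOURCES` set, and the word-overlap scoring are assumptions made for this example, and a real deployment would typically use embeddings and a vector database instead of keyword overlap.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Doc:
    source: str  # where the text came from (hypothetical source labels)
    text: str


# Assumption: only these sources have been approved for grounding answers.
APPROVED_SOURCES: Set[str] = {"policy-wiki", "support-tickets"}


def tokenize(text: str) -> Set[str]:
    """Lowercase whitespace tokenizer; a stand-in for real embedding search."""
    return set(text.lower().split())


def retrieve(query: str, corpus: List[Doc], k: int = 2) -> List[Doc]:
    """Return up to k approved documents that share words with the query."""
    query_terms = tokenize(query)
    allowed = [d for d in corpus if d.source in APPROVED_SOURCES]
    scored = [(len(query_terms & tokenize(d.text)), d) for d in allowed]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_prompt(query: str, docs: List[Doc]) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"[{d.source}] {d.text}" for d in docs)
    return f"Answer using only the context below.\n{context}\nQuestion: {query}"


corpus = [
    Doc("policy-wiki", "Refunds are issued within 14 days of purchase"),
    Doc("personal-notes", "Refunds are issued whenever a customer asks"),
    Doc("support-tickets", "Customer asked about invoice format"),
]

hits = retrieve("when are refunds issued", corpus)
prompt = build_prompt("when are refunds issued", hits)
```

In this toy corpus the unapproved `personal-notes` entry never reaches the prompt, even though it matches the query, because filtering happens before scoring.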

Agentic system orchestration

We build AI agents that operate across your systems. These agents can read inputs, retrieve context, make structured decisions, and execute actions through APIs. Workflows such as support handling, reporting, and internal operations become coordinated, multi-step processes.

Model-agnostic architecture

We design your system to remain independent from any single model provider. AI capabilities are routed through an abstraction layer that allows switching between models such as GPT, Claude, Gemini, or open-source alternatives without disrupting your system or workflows.
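
One way such an abstraction layer could look is sketched below. This is a simplified illustration, not a definitive implementation: `ModelRouter`, the capability name `summarize`, and the two stub adapters are hypothetical, and real adapters would wrap the relevant vendor SDKs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A provider adapter is any callable that maps a prompt to a completion.
ModelFn = Callable[[str], str]


@dataclass
class ModelRouter:
    """Hypothetical abstraction layer: callers name a capability, not a vendor."""

    adapters: Dict[str, ModelFn] = field(default_factory=dict)
    routes: Dict[str, str] = field(default_factory=dict)

    def register(self, name: str, fn: ModelFn) -> None:
        """Make a provider adapter available under a short name."""
        self.adapters[name] = fn

    def route(self, capability: str, adapter_name: str) -> None:
        """Point a capability at a registered adapter."""
        if adapter_name not in self.adapters:
            raise KeyError(f"unknown adapter: {adapter_name}")
        self.routes[capability] = adapter_name

    def complete(self, capability: str, prompt: str) -> str:
        """Run the prompt through whichever adapter the capability maps to."""
        return self.adapters[self.routes[capability]](prompt)


# Stub adapters standing in for real provider clients.
def stub_model_a(prompt: str) -> str:
    return f"[model-a] {prompt}"


def stub_model_b(prompt: str) -> str:
    return f"[model-b] {prompt}"


router = ModelRouter()
router.register("model-a", stub_model_a)
router.register("model-b", stub_model_b)

router.route("summarize", "model-a")
first = router.complete("summarize", "Q3 report")

# Switching providers is a routing change; calling code stays the same.
router.route("summarize", "model-b")
second = router.complete("summarize", "Q3 report")
```

Application code only ever calls `router.complete("summarize", ...)`, so swapping the underlying model does not touch the workflows built on top of it.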

Governed execution layer

Every interaction with AI passes through a control layer. Access rights, data visibility, and allowed actions are enforced before any response is generated. Outputs are validated, logged, and monitored to ensure alignment with business rules and compliance requirements.
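
The order of operations described above (check access, generate, validate, log) can be sketched roughly as follows. All names here (`User`, `PolicyError`, `governed_call`, the `finance` role) are hypothetical, and the model and validator are trivial stand-ins chosen so the example runs on its own.

```python
import logging
from dataclasses import dataclass
from typing import Callable, FrozenSet

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("ai.audit")


@dataclass(frozen=True)
class User:
    name: str
    roles: FrozenSet[str]


class PolicyError(PermissionError):
    """Raised when a caller lacks the role required for an AI action."""


def governed_call(
    user: User,
    required_role: str,
    prompt: str,
    model: Callable[[str], str],
    validate: Callable[[str], bool],
) -> str:
    # 1. Enforce access rights before the model is ever invoked.
    if required_role not in user.roles:
        audit_log.warning("denied user=%s role=%s", user.name, required_role)
        raise PolicyError(f"{user.name} lacks role {required_role}")

    # 2. Generate the response.
    output = model(prompt)

    # 3. Validate the output against a business rule before returning it.
    if not validate(output):
        audit_log.warning("rejected output user=%s", user.name)
        raise ValueError("output failed validation")

    # 4. Log the approved interaction for later review.
    audit_log.info("ok user=%s prompt_chars=%d", user.name, len(prompt))
    return output


# Stand-ins for a real model and a real validation rule.
def echo_model(prompt: str) -> str:
    return prompt.upper()


def short_enough(output: str) -> bool:
    return len(output) < 200


analyst = User("ana", frozenset({"finance"}))
result = governed_call(analyst, "finance", "summarize invoice 17", echo_model, short_enough)
```

A request from the same user for an action requiring a role they do not hold would raise `PolicyError` before any model call is made.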

Evaluation and continuous control

We implement evaluation pipelines that measure response accuracy, consistency, and system performance under real conditions. AI behavior is continuously monitored and refined, allowing the system to improve while remaining stable and predictable in production.
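
As a rough illustration of an evaluation gate, the sketch below scores a model against a small set of expected answers and compares the result to a release threshold. The test cases, the substring-match scoring rule, the stub model, and the `THRESHOLD` value are all assumptions for the example; production evaluation usually combines several metrics and much larger datasets.

```python
from typing import Callable, List, Tuple

Case = Tuple[str, str]  # (prompt, substring expected in the answer)


def evaluate(model: Callable[[str], str], cases: List[Case]) -> float:
    """Return the fraction of cases whose answer contains the expected text."""
    passed = sum(
        1 for prompt, expected in cases
        if expected.lower() in model(prompt).lower()
    )
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    """Stand-in for a real model: one correct answer, one wrong answer."""
    answers = {
        "capital of France?": "Paris is the capital.",
        "2 + 2?": "5",
    }
    return answers.get(prompt, "")


cases: List[Case] = [
    ("capital of France?", "paris"),
    ("2 + 2?", "4"),
]

THRESHOLD = 0.9  # hypothetical minimum accuracy required before rollout
score = evaluate(stub_model, cases)
release_ok = score >= THRESHOLD
```

Running the same case set on every prompt, retrieval, or model change gives a simple regression signal: a drop in `score` blocks the change before it reaches users.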

From concept to production in 12 weeks

A structured, outcome-driven delivery model designed for real enterprise environments. Fixed phases. Clear deliverables. Full visibility from day one.

We analyze your systems, data flows, workflows, and compliance constraints to identify where generative AI creates measurable business impact.

This is a focused discovery phase. We map how AI will operate inside your existing architecture – across CRM, ERP, internal tools, and data platforms – and define exactly what is feasible within your environment.

Each opportunity is evaluated against business impact, implementation complexity, and operational risk. You receive a prioritized roadmap of GenAI use cases, along with a clear execution plan and cost model, before any development begins.

Deliverables:

- Integration roadmap document

- Prioritized use case list with ROI assumptions

- Architecture outline aligned to your systems

- Token usage and cost projections

- Executive readout with go/no-go recommendations

We build the core infrastructure that allows AI to operate inside your systems in a controlled, secure, and observable way. This includes setting up your private model access layer, retrieval pipelines, evaluation framework, and governance controls. Every component is designed to integrate with your identity provider, data sources, and existing services. AI becomes part of your system architecture, governed by the same rules as your software. Security, access control, and auditability are enforced at the foundation level.

Deliverables:

- Running AI foundation deployed in your environment

- RAG pipelines connected to your data sources

- Model routing and abstraction layer

- Evaluation and monitoring framework

- Security validation and internal approval readiness

We implement the first production-grade GenAI capabilities directly into your applications and workflows. This includes copilots, internal assistants, document processing pipelines, or agent-based automations – depending on what was prioritized during the audit.

Each integration is built to function within real user workflows. AI interacts with your systems through controlled APIs, follows defined business rules, and operates within approved data boundaries. All components are tested end-to-end with real data, real edge cases, and real user scenarios.

Deliverables:

- Production-ready GenAI features integrated into your systems

- End-to-end tested workflows with real data

- User-facing interfaces or embedded components

- Evaluation reports (accuracy, reliability, performance)

- Staging environment ready for controlled rollout

We validate system behavior under production conditions and prepare for controlled rollout.

This includes load testing, adversarial testing, and evaluation of AI outputs against defined quality and compliance criteria. Any edge cases are resolved before full deployment. The rollout is phased, allowing you to introduce AI capabilities without disrupting ongoing operations.

Your team receives full documentation, operational guidelines, and the ability to scale independently. AI becomes a stable, governed part of your system.

Deliverables:

- Live production deployment

- Performance and reliability validation results

- Operations runbook and governance guidelines

- Scaling roadmap with next-phase opportunities

- Knowledge transfer to your internal teams


FAQ

How is this different from hiring Accenture, IBM, or Deloitte?

Scope and focus. We only do GenAI integration for mid-market and enterprise – no pyramid of junior consultants, no 200-slide strategy decks, no multi-year master service agreements. You typically work directly with senior AI engineers, get to production in 90 days, and pay 40-70% less than a comparable engagement with a large consultancy.

How is this different from a generic dev shop that added “GenAI” to its homepage last year?

GenAI in production is a specialized discipline – evaluation harnesses, retrieval tuning, prompt injection defense, observability, cost governance, model routing. Generalist shops ship demos that break the first time they hit real data. We ship systems that operate reliably with your users and your auditors.

Do we own the code?

Completely. Everything we build is your intellectual property, delivered in your repositories, running on your infrastructure. There are no ongoing license fees for the integrations we deliver.

What models and platforms do you work with?

All major foundation models (OpenAI, Anthropic Claude, Google Gemini, Meta Llama, Mistral, Cohere, and others), all major clouds (AWS Bedrock, Azure OpenAI, Google Vertex AI), and fine-tuned or open-weight models when the use case calls for them. We recommend based on your use case.

What if our data is messy?

Most clients operate with messy real-world data. Our methodology assumes this from the start. We identify what needs to be fixed, what can be handled within the system, and what may block progress. Data preparation is addressed as part of the integration process.

What production-grade GenAI integration actually delivers

Our generative AI integration services offer measured outcomes across real deployments. Built inside your systems, validated under real operating conditions.

30-70% faster workflows

Copilots, summarizers, and AI agents operate directly inside your existing tools – CRM, ERP, support platforms, and internal systems. Tasks that previously required manual coordination are executed in seconds. Time savings are measured at the level of actual work completed per employee, per week.

2-5x support deflection

Context-aware assistants retrieve answers from your internal documentation, tickets, and product data. Responses are grounded in your actual systems, reducing escalation volume while maintaining accuracy and consistency across customer interactions.

15-40% higher conversion

AI-driven personalization is embedded directly into product flows, onboarding, and content delivery. Recommendations, guidance, and interactions adapt to user context in real time, increasing engagement and conversion across key revenue paths.

100% auditable and compliant operations

Every prompt, response, data retrieval, and system action is logged, traceable, and governed. Access is role-controlled, outputs are monitored, and decisions are fully reviewable. This aligns AI behavior with enterprise compliance requirements including GDPR, HIPAA, and SOC 2.

Predictable AI cost control

Token usage, model selection, and workload distribution are engineered and modeled before scaling. Requests are routed to the most efficient models, repeated queries are cached, and cost behavior remains stable as usage grows.
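
The routing and caching behavior described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `small` and `large` tiers, the 50-token threshold, and the four-characters-per-token estimate are hypothetical, and the returned string stands in for a real model call.

```python
from functools import lru_cache

# Counts how many requests actually reached each (hypothetical) model tier.
calls = {"small": 0, "large": 0}


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about four characters per token (an assumption)."""
    return max(1, len(text) // 4)


def pick_model(prompt: str, threshold_tokens: int = 50) -> str:
    """Send short prompts to the cheaper tier, long ones to the larger tier."""
    return "small" if estimate_tokens(prompt) <= threshold_tokens else "large"


@lru_cache(maxsize=1024)
def answer(prompt: str) -> str:
    """Repeated prompts are served from the cache and never hit a model."""
    model = pick_model(prompt)
    calls[model] += 1
    return f"{model}:{len(prompt)}"  # placeholder for a real completion


first = answer("reset password")
second = answer("reset password")  # identical prompt, served from cache
third = answer("x" * 400)          # long prompt, routed to the large tier
```

After these three requests only two model calls have been made, one per tier, which is the effect that keeps cost growth below usage growth when many queries repeat.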

Continuous system improvement

AI performance is not static. Evaluation pipelines measure accuracy, relevance, and system behavior over time. Feedback loops refine retrieval, prompts, and model selection, ensuring the system improves under real usage conditions without disrupting operations.

Why choose us

| | SumatoSoft | Big consultancies | Generic dev shops |
|---|---|---|---|
| Time to production | 90 days | 9–18 months | Unpredictable |
| GenAI specialization | 100% focused | One of many practices | Generalists who added AI last quarter |
| Security & compliance | Enterprise-grade by default | Enterprise-grade | Often an afterthought |
| Team composition | Senior AI engineers only | Heavy pyramid with juniors | Mixed seniority |
| Code & IP ownership | Yours, day one | Often licensed / co-owned | Varies |
| Post-launch support | Embedded or advisory, flexible | Fixed multi-year contracts | Usually none |
| Model flexibility | All major + open-source | Partnership-limited | Limited |
| Risk reversal | Fixed-fee audit, scoped phases | Time & materials | Time & materials |
| Deployment flexibility | VPC, private cloud, on-prem | Mostly cloud-first | Limited options |


Let’s start

If you have any questions, email us at info@sumatosoft.com.