QA & Testing Services for Traditional and AI Software

Traditional testing fails on AI-driven software. SumatoSoft is a Dual-Engine engineering firm. We run rigorous deterministic QA (Manual, Automation, API) for your software, and apply advanced LLMOps Evals, Red-Teaming, and RAG-scoring to ensure your Generative AI features never hallucinate or leak data.

We test both code and AI

Modern software combines deterministic logic with AI-driven components. We design QA that evaluates both with clear methods, measurable criteria, and defined outcomes

Deterministic QA for software systems

We verify that your software behaves exactly as intended across all layers from individual components to complete workflows.

Our QA covers:

- Functional validation of features and business logic

- Integration and API testing across systems

- Regression control for continuous releases

- Performance and stability under real load

- Security validation aligned with industry standards

Every test is structured, repeatable, and aligned with your release criteria.

Probabilistic QA for AI systems

AI systems are evaluated through defined and measurable criteria.

We evaluate how your AI behaves in real scenarios and ensure outputs remain consistent, accurate, and aligned with your system logic.

Our AI QA includes:

- Context precision and answer consistency evaluation

- Detection of reasoning deviations across scenarios

- RAG system validation (retrieval accuracy and response quality)

- Prompt injection resistance and consistent interaction behavior

- Continuous evaluation integrated into your workflows

AI behavior is continuously evaluated, scored, and aligned with system logic.

Unified quality across your system

We align both QA approaches into a single, unified process.

- Software logic is validated

- AI behavior is evaluated

- Release readiness is defined by measurable criteria

You operate with full visibility into system quality across every component that drives your product.

Get a Free QA Quote

Discover how our tailored QA can fit your budget.

Our QA services

Our QA testing services work around how your product is built, released, and scaled.

Each engagement model defines clear responsibility, predictable execution, and measurable outcomes.

Full-cycle QA ownership

We take full responsibility for quality across your product lifecycle.

We define the QA strategy, build coverage, execute testing, manage environments, and report results in a structured way.

Your team works with one accountable partner from planning to release.

You get:

- Dedicated QA team aligned with your product

- End-to-end test coverage across systems and flows

- Structured reporting with clear quality metrics

- Release readiness based on defined acceptance criteria

QA consulting and audit

We evaluate your current QA setup and define how it should operate.

We analyze your processes, coverage, tools, and risks. Then we deliver a clear QA model aligned with your product, team, and release goals.

You get:

- Independent QA assessment

- Defined QA strategy and coverage model

- Tooling and process recommendations

- Clear roadmap for improving quality and efficiency

Managed testing

We execute testing operations under your priorities and roadmap.

You define scope and direction. We manage execution, coverage, and reporting, ensuring consistent quality across releases.

You get:

- Scalable QA team aligned with your workload

- Controlled test execution across cycles

- Regular reporting with measurable progress

- Coordination with your internal teams

QA as a service

We provide QA capacity when and where you need it.

Our engineers integrate into your team and workflows, supporting releases, sprints, or specific testing needs.

You get:

- Fast onboarding of QA engineers

- Flexible engagement based on your needs

- Seamless integration with your tools and processes

- Transparent tracking of work and results

QA testing services for AI-driven systems

We validate how your AI behaves in real operational conditions – with measurable evaluation, controlled execution, and consistent output quality.

RAG evaluation and response accuracy

Evaluation of how your AI retrieves and uses information. Each response is measured for context precision and faithfulness, ensuring outputs remain aligned with your data and business logic.

Prompt injection and adversarial testing

We test how your AI responds to complex and edge-case inputs. Structured red-teaming scenarios verify that the system follows defined access rules and maintains consistent behavior under varied conditions.

Reasoning consistency and drift monitoring

We continuously evaluate how your AI makes decisions over time. This maintains stable reasoning patterns and predictable outputs as models and data evolve.

Synthetic test data generation

We create controlled datasets that reflect real-world complexity. This enables large-scale testing without dependency on sensitive or production data, while maintaining high coverage across scenarios.

Agent workflow validation

This QA service is about testing AI systems that execute multi-step actions. Each step of the workflow is validated in controlled environments to ensure correct sequencing, accurate decisions, and reliable outcomes.

Full-spectrum QA strategy

We structure QA testing services as a system that combines human evaluation, automated validation, and AI-specific assessment. Each layer serves a defined role and operates together as a single, controlled process.

Human-in-the-loop validation

We apply manual testing where user behavior, interface logic, and real-world scenarios require evaluation beyond predefined scripts. Our QA engineers validate complete user flows, edge cases, and interaction consistency – ensuring the product behaves naturally in real usage.

Automated validation in CI/CD

We integrate automated testing into your delivery pipeline. Regression suites, API checks, and system validations run continuously – providing fast, consistent feedback across every build and release cycle.

AI and LLM evaluation

We implement evaluation pipelines for AI-driven functionality. Using frameworks such as RAGAS, we measure output quality across defined metrics, including context precision, reasoning consistency, and hallucination control. AI behavior is evaluated continuously and aligned with predefined acceptance thresholds.

Quality is defined by measurable standards

Quality is defined through clear metrics, controlled processes, and consistent execution. Every release is evaluated against agreed criteria, so decisions are based on data.

For traditional software systems

QA is structured around predictable delivery and transparent results. Each release is supported by a clear readiness assessment.

- Regression cycles are executed within defined timeframes, aligned with your release schedule.

- Test coverage is tracked and continuously expanded where it creates measurable value.

- Defects are prioritized, resolved, and verified through a controlled lifecycle.

- QA reports provide full visibility into system readiness at every stage.

For AI-driven systems

AI behavior is evaluated using structured and repeatable metrics. AI quality is managed as an operational parameter with visibility, control, and continuous evaluation.

- Context precision and output consistency are measured against defined thresholds.

- Model responses are evaluated for alignment with expected logic and domain rules.

- System behavior is continuously monitored through controlled evaluation pipelines.

- Deployment decisions are based on measurable performance.

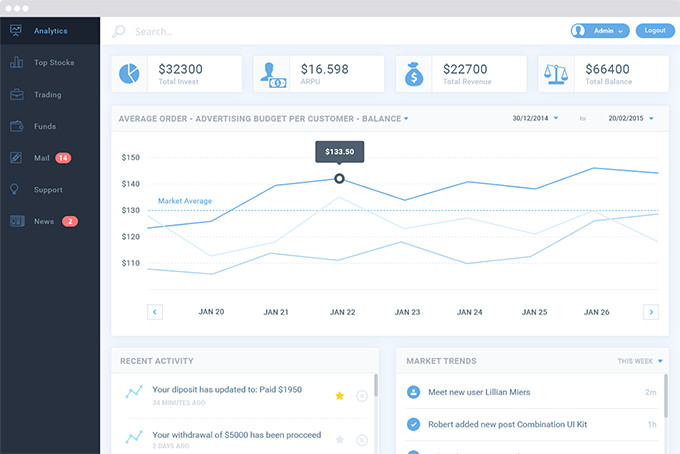

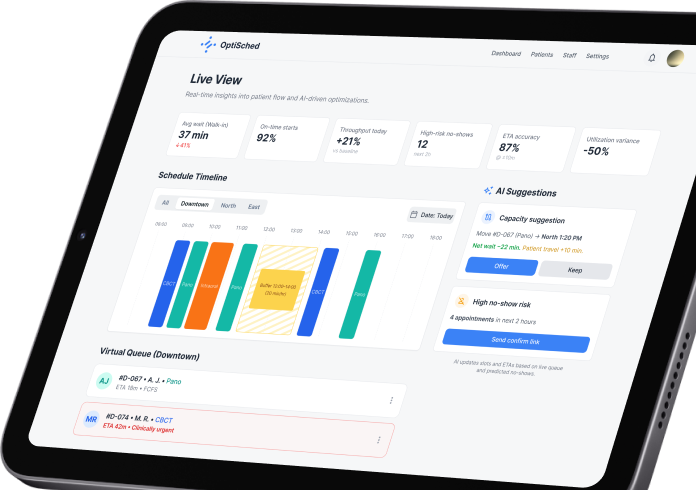

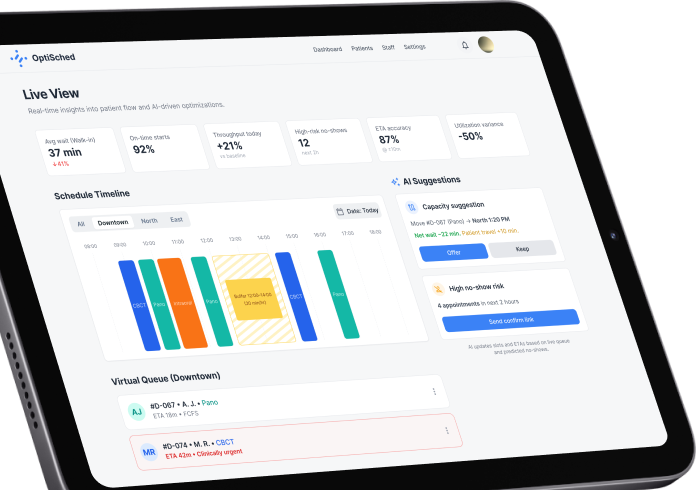

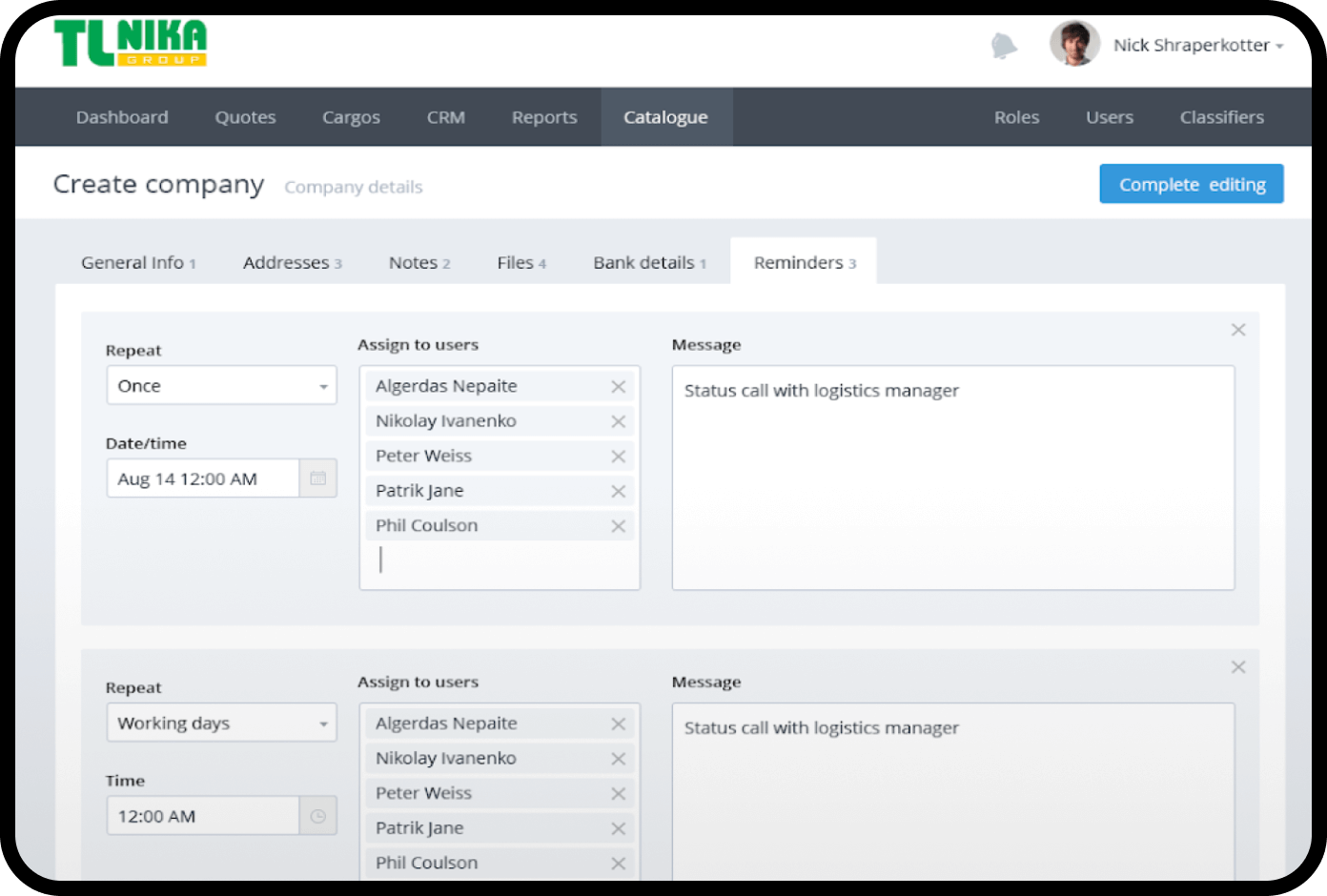

Expertise in business domains

We understand the unique challenges and requirements of each domain. Our QA engineers adapt test strategies to your industry’s context – for example, ensuring HIPAA compliance and data security in healthcare apps, or handling the complex transaction flows and PCI DSS standards in fintech software.

AdTech & Marketing

FinTech

Logistics

Healthcare

Media & Entertainment

Automotive

eCommerce & Retail

Travel & Hospitality

EdTech

Real Estate

Start Your QA Journey

Partner with experts for reliable, high-quality software.

What deliverables you receive

Structured QA outputs are delivered to give you full visibility into product quality and clear control over release readiness.

QA strategy document

Test coverage map

Test cases and automation suites

Bug reports with prioritization

QA dashboards and metrics

AI evaluation reports (for AI-driven systems)

Quality as a controlled business outcome

We design quality assurance as an operational model that supports how your product is built, released, and scaled.

- Every release is evaluated against clearly defined criteria.

- Test coverage, quality thresholds, and acceptance conditions are set upfront and applied consistently across development cycles.

- Your team always understands the current state of the product – what is validated, what is in progress, and what is ready for release.

Our QA approach creates a stable delivery process where quality is visible, measurable, and aligned with business goals.

- Predictable releases

- Clear visibility into product readiness

- Reduced operational overhead

- Confidence in every deployment

Manual testing tools

Automation testing tools

Talk to a QA Expert

Get personalized advice for your unique project needs.

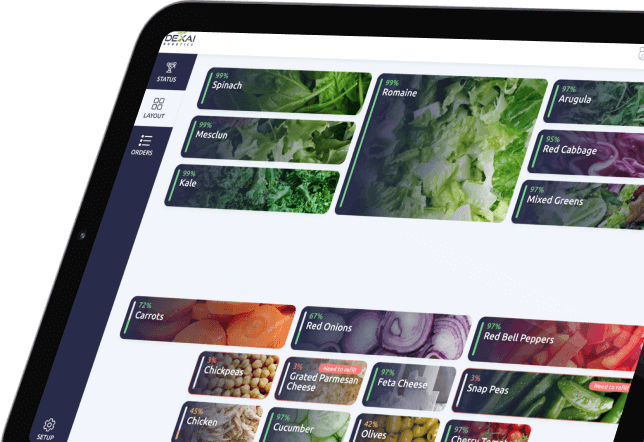

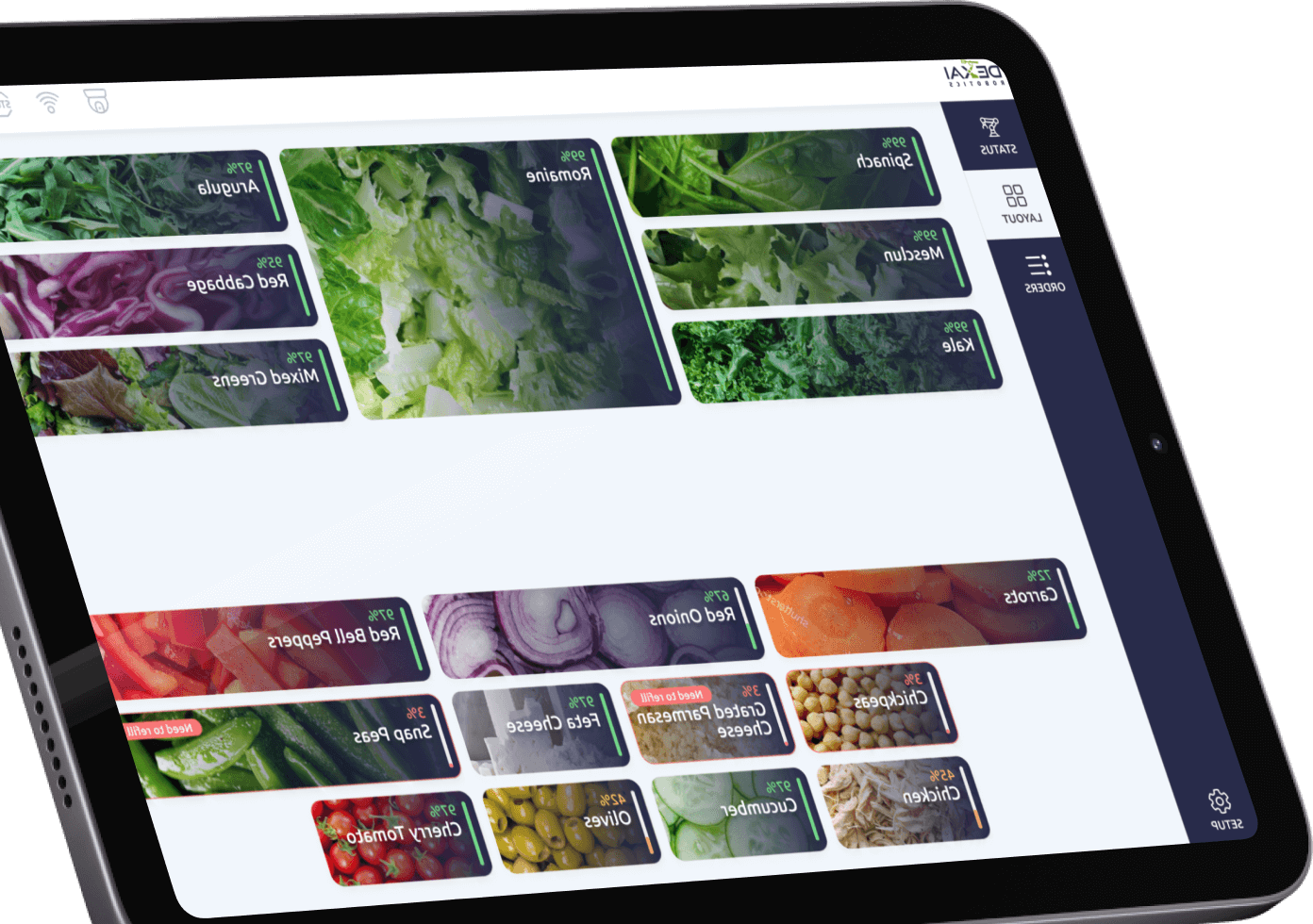

Our recent works

How your QA starts and scales

QA is integrated into your product and delivery process from the first step. Quality is defined, measured, and continuously improved within a single, consistent framework.

- The process begins with a structured evaluation of your product and QA maturity.

- Functionality, integrations, performance behavior, and existing testing practices are reviewed.

- For systems with data-driven or AI components, output consistency and evaluation approach are also assessed.

- You receive a clear view of current quality, test coverage, and areas that require attention.

A structured approach defines how quality is managed across your product.

This includes:

- Test scope and priorities

- Coverage levels across features and systems

- Testing approach across manual, automated, and evaluation-driven components

- Tools, environments, and reporting structure

The result is a QA strategy aligned with your product goals, architecture, and release model.

QA is integrated into your development workflow.

- Test environments are configured

- Test cases and automation are prepared

- QA is embedded into your CI/CD pipeline

- Evaluation flows are defined where output validation is required

- Communication and reporting are aligned with your team

QA becomes part of your delivery process and scales with it.

Continuous testing runs aligned with your release cycles. You see progress, quality levels, and release readiness at any moment. What it means in practice:

- Test cycles follow defined coverage and priorities

- Defects are documented, prioritized, and tracked

- System behavior and output consistency are continuously validated, where applicable

- Results are structured into clear, decision-ready reports

QA improves as your product evolves:

- Test coverage expands where it brings value

- Automation increases where it improves efficiency

- Evaluation approaches are refined for systems that rely on dynamic outputs

- Processes are adjusted based on real project data

Awards & Recognitions

Frequently asked questions

How do you perform QA on a RAG (Retrieval-Augmented Generation) system?

Algorithmic evaluation frameworks such as RAGAS or TruLens are integrated into your CI/CD pipeline. The AI is scored on retrieval precision and faithfulness.

How do you perform AI red-teaming and why is it part of QA?

If your app has an open text box connected to an LLM, attackers will try to manipulate it. Red-teaming actively tests the AI through adversarial scenarios such as prompt injections and jailbreaks. This ensures the system does not bypass role-based access controls (RBAC) or expose personally identifiable information (PII).

How do you test for reasoning drift in custom LLMs?

Continuous LLMOps monitoring is implemented. Shadow tests are deployed in production to continuously sample outputs and alert your engineering team when the model begins to hallucinate or deviate from its core system prompt.

How do you test autonomous AI agents that execute multi-step actions, like updating a CRM or sending emails?

Agentic AI is tested through blast radius containment. The agent’s chain of thought is validated in secure, containerized sandbox environments with mocked APIs and cloned databases. Automated assertions verify correct logical execution and adherence to human-in-the-loop (HITL) approval gates.

How do you load-test an application that relies on external LLM APIs (like OpenAI) without spending a fortune on API tokens?

Semantic API mocks are engineered for performance testing. Thousands of concurrent users are simulated while LLM calls are intercepted and replaced with pre-calculated responses at realistic latency. This isolates and stress-tests core infrastructure without unnecessary API costs.

More about SumatoSoft services

Let’s start

If you have any questions, email us info@sumatosoft.com