MVP software development for AI products & core business

Build the first release on the architecture you can keep. SumatoSoft helps SaaS teams, enterprise product groups, and founders launch MVPs that test demand, prove technical fit, and set up the next phase without demo-only shortcuts.

Our MVP development services scope

An MVP should validate the core workflow, demonstrate that the architecture can support the product, and outline the next release. Our MVP software development services cover product discovery, UX/UI design, backend and frontend engineering, cloud setup, QA, launch support, and post-launch iteration planning.

When AI is part of the product, we add the work that many MVP vendors leave out:

- Data audit and source mapping

- Retrieval and permission design

- Model selection and routing logic

- Token and infrastructure cost modeling

- Evaluation rules, abuse testing, and output review paths

Why leaders build MVPs first

An MVP is a way to reduce risk before the product absorbs more budget, more integrations, and more operational exposure.

Technical risk

For AI products, the first question is whether the model can perform reliably in your actual environment. Before you invest in a larger build, an MVP shows whether the system can work with your data, your workflows, and your quality threshold.

Market risk

An MVP puts the core use case in front of real users before the team spends time on secondary features. That early release helps validate demand, expose weak assumptions, and show what deserves further investment.

Security risk

For enterprise MVPs, security cannot wait until a later phase. The first release should establish the access model, environment boundaries, and data-handling rules from the start. When AI is involved, that includes role-based access, controlled retrieval, and an isolated deployment path where needed.
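As a rough illustration of what controlled retrieval can mean in practice, the sketch below filters documents by the caller's roles before any ranking happens, so restricted content never reaches the model context. It is a minimal example under stated assumptions, not a description of a specific production setup: the `Document` type, the `retrieve` function, and the naive term-overlap scoring are all hypothetical stand-ins for a real index and permission store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical document record with an access-control list of roles."""
    doc_id: str
    text: str
    allowed_roles: frozenset[str]


def retrieve(
    query: str,
    user_roles: set[str],
    corpus: list[Document],
    limit: int = 3,
) -> list[Document]:
    """Return documents the user may read, ranked by naive term overlap.

    The permission filter runs BEFORE ranking, so documents outside the
    user's roles are never scored or returned.
    """
    terms = set(query.lower().split())
    # Keep only documents that share at least one role with the user.
    visible = [doc for doc in corpus if doc.allowed_roles & user_roles]
    # Toy relevance score (assumption): number of query terms found in the text.
    scored = [(len(terms & set(doc.text.lower().split())), doc) for doc in visible]
    ranked = sorted(
        (pair for pair in scored if pair[0] > 0),
        key=lambda pair: pair[0],
        reverse=True,
    )
    return [doc for _, doc in ranked[:limit]]
```

In a real build the overlap score would typically be replaced by a vector or keyword index, but the ordering of the two steps (filter first, rank second) is the point of the example.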

Your idea deserves more than a pitch deck!

Turn it into a working MVP with our expert dev team.

How we build an MVP

The path depends on the product. Standard software and AI-backed software should not be handled in the same way.

Using business analysis for MVP scoping, we define the use case, user roles, workflows, success metrics, integrations, release scope, and hosting constraints. The output is a scoped first release, UI/UX design for the MVP, and an architecture direction.

Typical duration: 2 to 4 weeks

This phase applies when AI is central to the product or carries material delivery risk.

Traditional software can move from discovery into build. AI products usually should not. Before we commit to the public MVP, we test the model on a bounded slice of real or sanitized data, estimate operating cost, define permissions, and set evaluation rules. We call this step an AI Proof of Concept (PoC).

The output is a tighter build scope, a go/no-go view on the AI approach, and a safer delivery path.

Typical duration: 2 to 4 weeks

We lock the release scope, development environments, repo structure, integration plan, QA approach, rollout path, and reporting cadence. For AI products, we also define observability, abuse testing, and evaluation checkpoints.

Typical duration: 1 to 2 weeks

We design and build the product, connect integrations, prepare the release environment, and test throughout the build. For AI products, this phase includes retrieval setup, model integration, prompt controls, tracing, and feedback mechanisms inside the UI.

Typical duration: 8 to 12 weeks, depending on scope

After thorough QA and testing, we ship the MVP, observe how it performs, fix what the first users expose, and define the next release based on usage data, support signals, and business goals.

90-day AI vs traditional MVP pipeline

Traditional MVPs can ship faster than this. AI-backed products often need a wider path because the data and evaluation layer must be built alongside the app.

| Timeline | Traditional MVP | AI-backed MVP |
|---|---|---|
| Days 1–14 | Discovery, user flows, release scope, architecture outline | Discovery plus data audit, retrieval feasibility, model choice, token-cost testing, and guardrails |
| Days 15–45 | UX/UI, frontend and backend foundation, primary integrations, environments | App foundation plus data cleanup, chunking, vector index, permission mapping, and pipeline setup |
| Days 46–75 | Feature build, QA, and release prep | Model integration, prompt design, streaming UX, eval datasets, tracing, and user feedback hooks |
| Days 76–90 | UAT, hardening, release | Red-team tests, prompt-injection testing, AI evals, rollout hardening, and release |


MVP deliverables we prepare

Working with us means full transparency in how the work is done. A key part of that is the tangible deliverables we produce at each stage of the collaboration.

Product Strategy & Planning

- validated product concept and user needs analysis;

- lean canvas or business model overview;

- feature roadmap and MVP scope definition;

- cost and timeline estimation;

- regular detailed reports about project health and status;

- risk assessment and mitigation analysis;

- product limitation document.

Design

- wireframes, mockups, and clickable prototypes;

- development-ready UI/UX designs;

- UI kit to simplify the development process;

- style guides.

Engineering

- technical architecture and tech stack recommendation;

- scalable backend and API;

- secure and optimized infrastructure setup;

- fully functional MVP ready for deployment.

Quality & Growth Readiness

- QA reports and test documentation;

- test cases for test automation;

- post-launch performance metrics and next-step recommendations.

We build a product, not a thin model wrapper

A stronger AI MVP is not defined solely by the model. It is defined by how your product handles data, permissions, context, workflows, and user outcomes.

Got a vision? Let’s build its first proof!

Book a free strategy call and get expert feedback on your MVP scope.

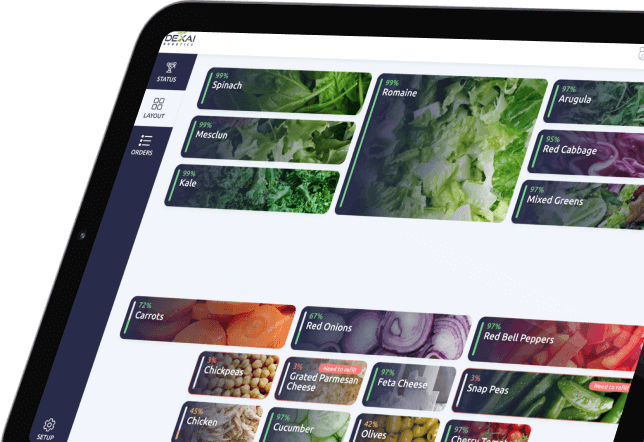

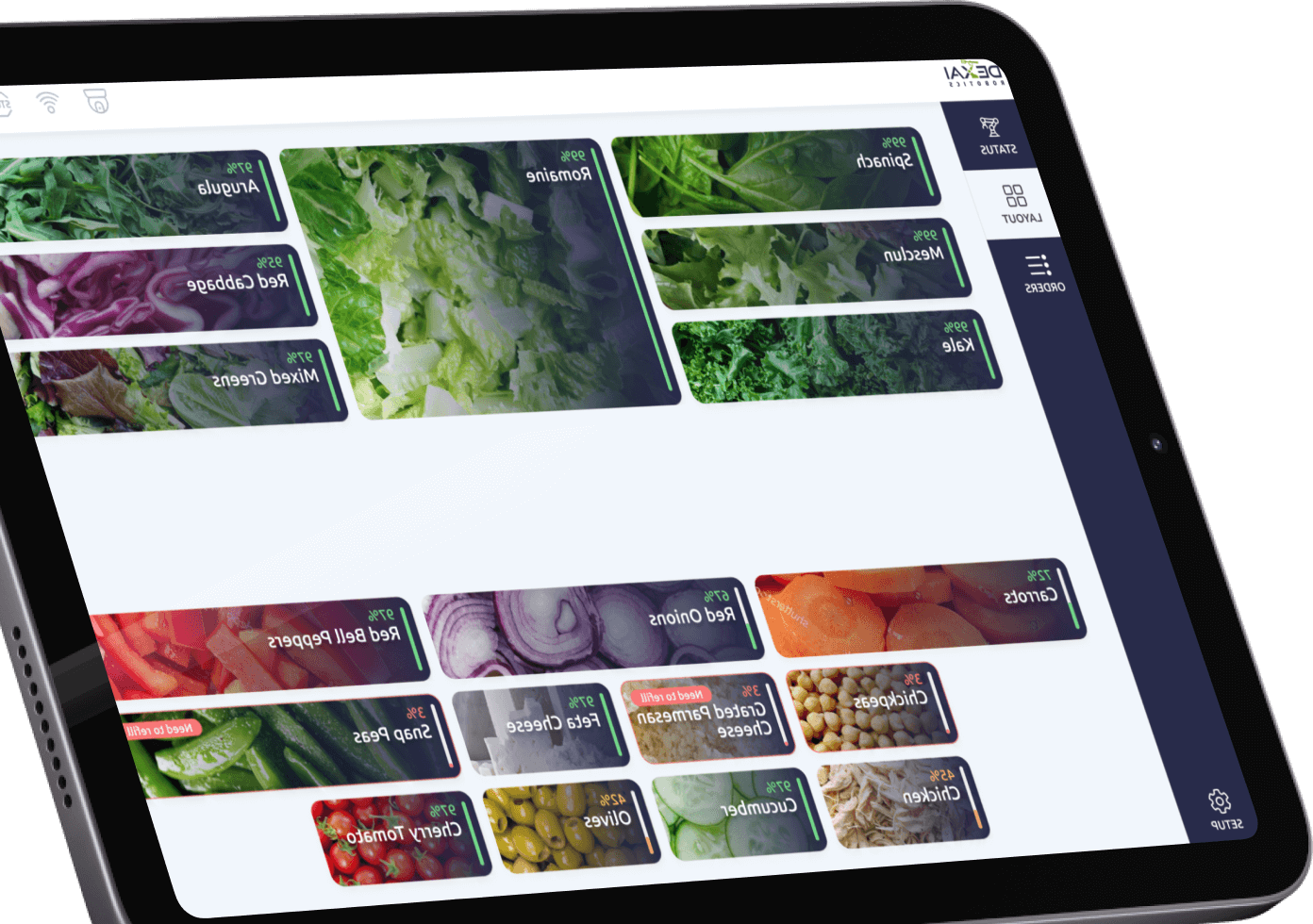

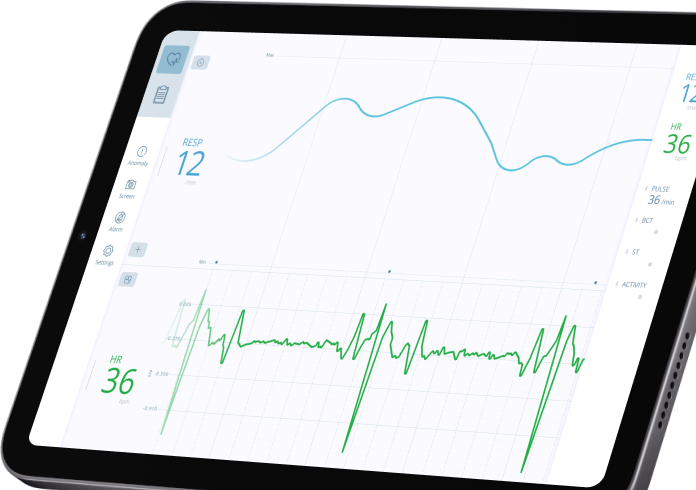

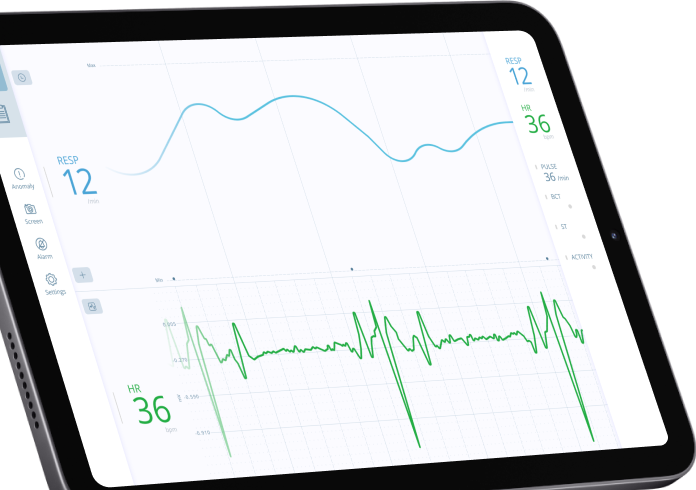

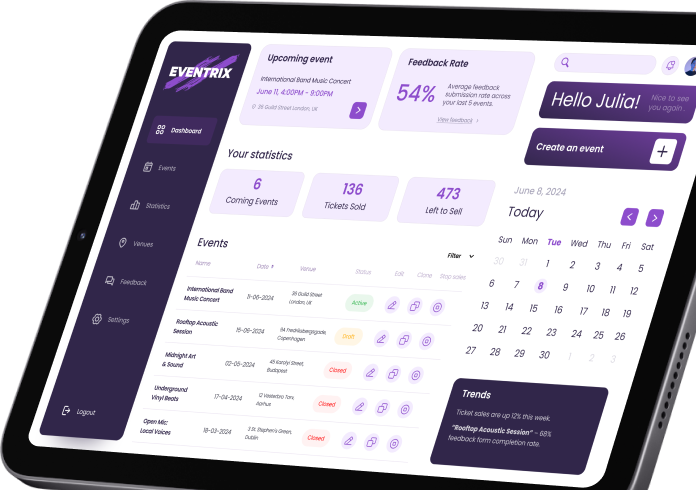

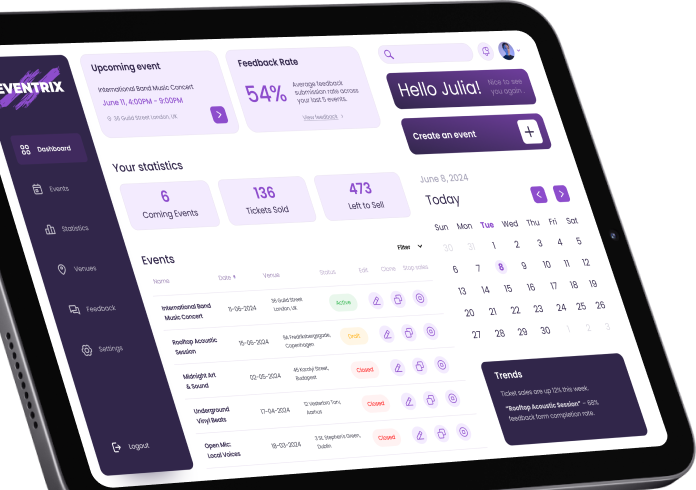

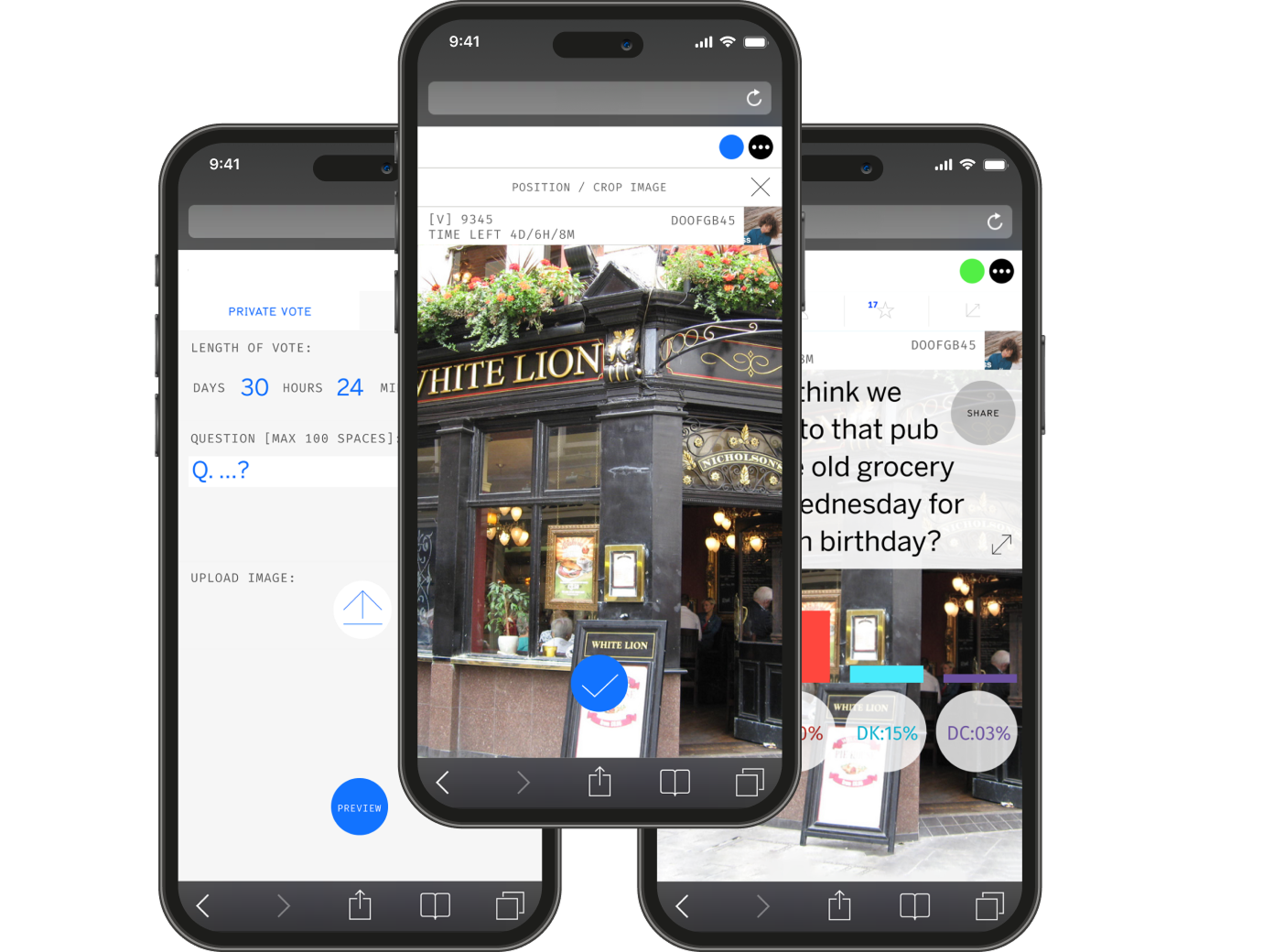

Our recent works

Core tech stack we work with

Idea-stage? We turn that into clickable reality.

Start with a free discovery consultation.

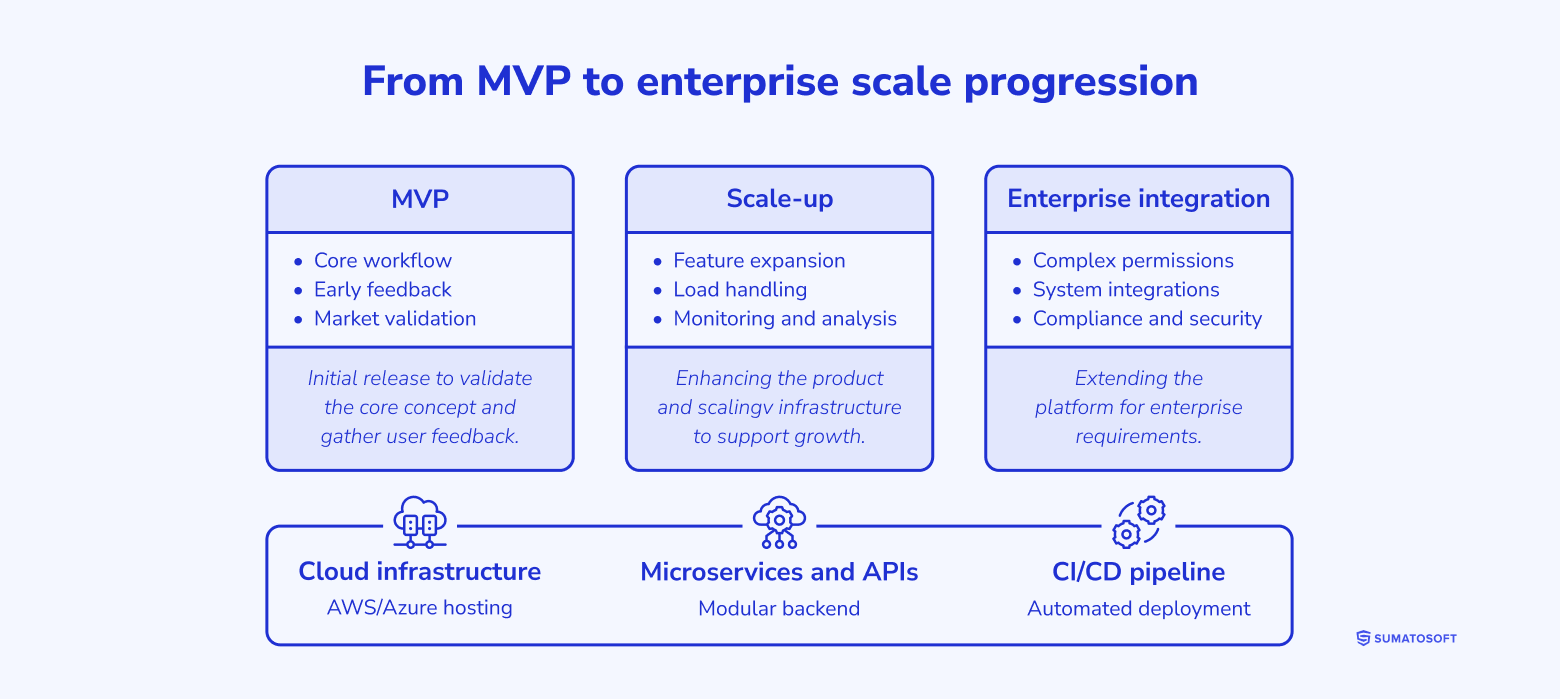

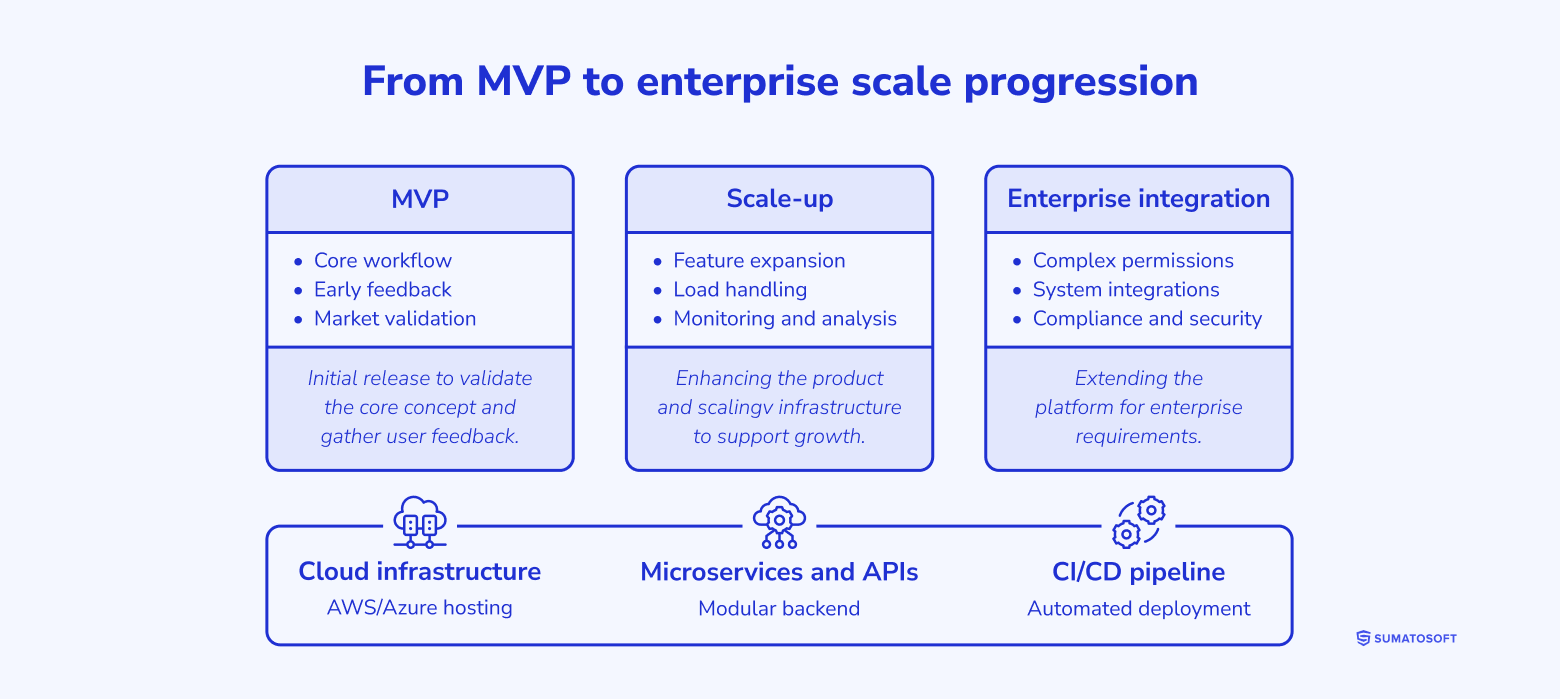

From MVP to enterprise scale

Buyers often worry that an MVP is only a temporary build and that real growth will require a rewrite. We avoid that problem by engineering the MVP on a production-ready foundation from day one.

We use scalable cloud infrastructure, structured service architecture, stable APIs, and CI/CD so the product can grow without being rebuilt.

Why entrust MVP development to us

We have worked in software development for startups since 2012 and know it inside out. Our MVP software development services cover everything needed to develop your MVP application, from building a Lean Canvas to releasing a fully functioning MVP.

- You own the IP and source code

The MVP is your asset. The value should not sit in a vendor-controlled wrapper, internal platform, or hidden delivery shortcut.

- The product is built on real infrastructure

We use delivery environments and cloud architecture that support growth.

- AI guardrails are part of the build

If AI is in scope, the product ships with defined data access rules, evaluation checkpoints, logging, and abuse testing.

- One team covers product engineering and AI delivery

You do not need one vendor for the app and another for the model layer. We handle the standard product stack and AI-specific work as a single delivery path.

Awards & recognitions

Let’s start

If you have any questions, email us at info@sumatosoft.com

FAQ

What is the difference between a PoC and an MVP for an AI product?

A PoC answers the technical question: can this model or retrieval setup do the job inside your data and workflow constraints? An MVP answers the market and product question: will users adopt and pay for this workflow once it is packaged as software?

How do you estimate AI operating costs before launch?

We test model usage on a bounded dataset, estimate token and retrieval patterns, and decide where caching, routing, or smaller models should sit. The result is an operating-cost model based on the intended workflow, not guesswork.
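The arithmetic behind such an estimate can be sketched in a few lines. The example below is a simplified model, not an actual pricing tool: `ModelPrice`, `monthly_cost`, and every number in the usage note are hypothetical, and it assumes that cached requests incur no model cost and that retrieval and infrastructure costs are tracked separately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical per-million-token prices in USD (not real vendor pricing)."""
    input_per_million: float
    output_per_million: float


def monthly_cost(
    requests_per_month: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    price: ModelPrice,
    cache_hit_rate: float = 0.0,
) -> float:
    """Estimate monthly model spend in USD.

    Assumption: a cache hit skips the model call entirely, so only the
    remaining share of requests is billed.
    """
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    billable_requests = requests_per_month * (1.0 - cache_hit_rate)
    input_cost = billable_requests * avg_input_tokens * price.input_per_million / 1_000_000
    output_cost = billable_requests * avg_output_tokens * price.output_per_million / 1_000_000
    return input_cost + output_cost
```

With illustrative inputs of 100,000 requests per month, 1,500 input tokens and 300 output tokens per request, prices of 2 and 8 USD per million tokens, and a 25% cache hit rate, `monthly_cost` returns 405.0. Without caching the same workload comes to 540.0, which shows how a single architectural decision moves the estimate.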

Can you build an AI MVP with HIPAA-aligned handling or SOC 2-ready controls?

Yes. We can design the MVP around private hosting options, role-based access, logging, retention rules, tenant separation, and stricter model-provider terms. Formal audits and attestations still happen outside the product build itself.

How do you test an AI MVP if outputs vary?

We combine normal QA with dataset-based AI evaluation. The system is scored on retrieval quality, faithfulness, latency, refusal behavior, and failure modes. We also test prompt injection and other abuse scenarios before release.
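A dataset-based evaluation can be illustrated with a small harness like the one below. It is a minimal sketch under stated assumptions: `EvalCase`, `passes`, `pass_rate`, and the `REFUSAL_MARKER` phrase are hypothetical, and a keyword check stands in for real faithfulness or retrieval-quality scoring, which usually needs richer metrics.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalCase:
    """One row of a hypothetical evaluation dataset."""
    prompt: str
    must_contain: tuple[str, ...] = ()  # facts the answer is expected to include
    should_refuse: bool = False         # True for abuse or out-of-scope prompts


# Hypothetical refusal phrase; a real system would use its own refusal signal.
REFUSAL_MARKER = "i can't help with that"


def passes(case: EvalCase, output: str) -> bool:
    """Check one output against its case using simple string rules."""
    text = output.lower()
    refused = REFUSAL_MARKER in text
    if case.should_refuse:
        return refused
    return not refused and all(key.lower() in text for key in case.must_contain)


def pass_rate(cases: Sequence[EvalCase], generate: Callable[[str], str]) -> float:
    """Run every case through `generate` and return the share that passed."""
    if not cases:
        raise ValueError("evaluation dataset is empty")
    passed = sum(passes(case, generate(case.prompt)) for case in cases)
    return passed / len(cases)
```

Because outputs vary between runs, a harness like this is usually run against a fixed dataset on every release candidate and compared to a threshold, rather than asserting an exact output string.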

How do you avoid building a thin wrapper with no moat?

By putting your value in the product logic, the data flow, the retrieval layer, the permission model, and the workflow.